How to Build an AI Strategy: A Step-by-Step Framework

In 2023, a $4 billion insurance company launched twelve AI initiatives simultaneously. Chatbots for claims. Machine learning for underwriting. Computer vision for damage assessment. NLP for policy review. Generative AI for marketing content. Twelve teams, twelve budgets, twelve vendor contracts.

Eighteen months later, two projects were in production. Three had been quietly shelved. Four were stuck in pilot purgatory — technically working but never approved for scale. The remaining three were still in development, behind schedule, and over budget. The company had spent $23 million on AI with almost nothing to show for it.

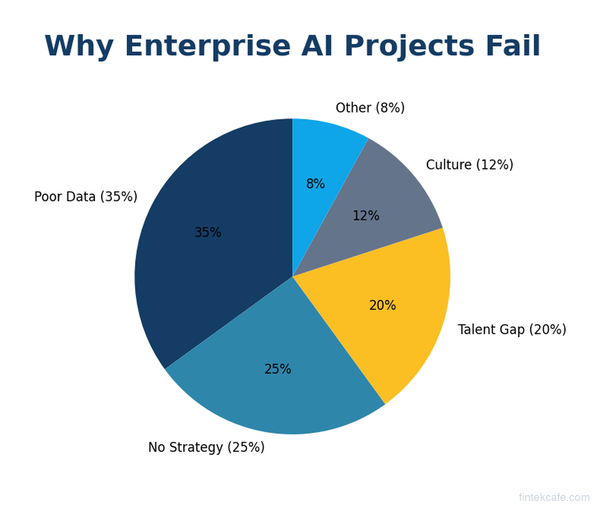

This isn't unusual. McKinsey's 2025 survey found that only 11% of companies have scaled AI beyond pilot projects. The problem is rarely the technology — it's the absence of strategy. Companies buy AI tools, hire data scientists, and launch experiments without a clear framework for deciding where AI should go, how to evaluate results, and when to scale or kill initiatives.

This guide provides that framework. It's a step-by-step process for building an AI strategy that produces results, not just pilots — designed for executives who need to make decisions about AI investment, not engineers debating model architectures.

Before You Start: The AI Strategy Maturity Model

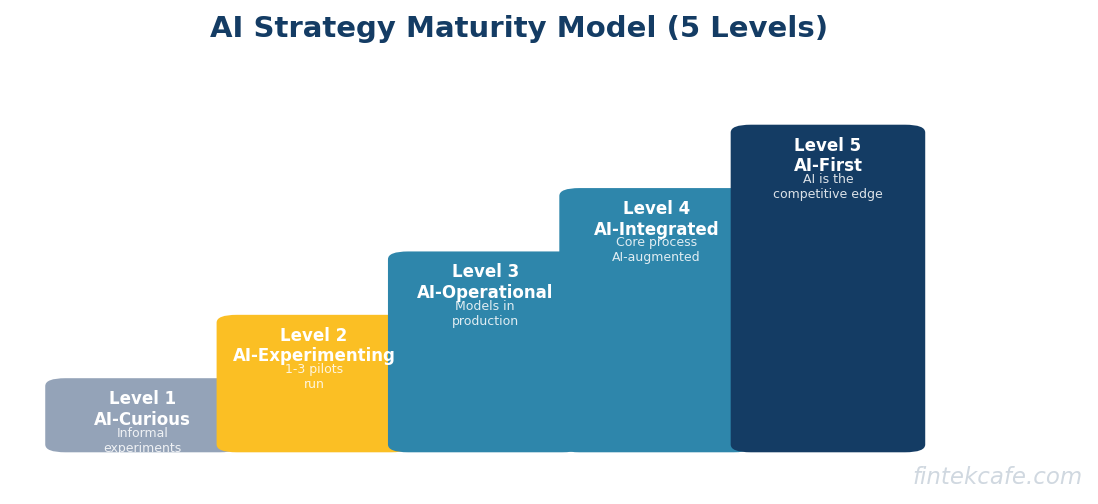

Strategy must match maturity. A company that has never deployed a machine learning model needs a different approach than one that already runs AI in production. Before building your strategy, honestly assess where you are.

Level 1: AI-Curious

Description: Leadership is interested in AI. There may be informal experiments — a data scientist running models in Jupyter notebooks, a team testing ChatGPT for drafting emails. Nothing is in production. No formal AI budget exists.

Your priority: Education and a single pilot project. Don't build a strategy for a company that doesn't yet understand what AI can and can't do. Run one small experiment, learn from it, then plan.

Level 2: AI-Experimenting

Description: The company has run 1-3 AI pilots. Some have shown promising results. There may be a small data science team. Leadership is asking "what's next?" but there's no coordination across teams — AI efforts are siloed.

Your priority: Consolidate lessons from pilots, create a cross-functional AI steering committee, and prioritize the next 2-3 use cases based on business impact. This is where most companies are in 2026.

Level 3: AI-Operational

Description: Multiple AI models are in production. There's a dedicated AI/ML team. Infrastructure exists for model training, deployment, and monitoring. The challenge is scaling beyond individual use cases to systematic AI adoption.

Your priority: Governance framework, model monitoring, build-vs-buy standardization, and an AI roadmap aligned to business strategy. Focus on industrializing AI, not just experimenting.

Level 4: AI-Integrated

Description: AI is embedded in core business processes. Decisions in credit, pricing, marketing, and operations are augmented or automated by AI. There's executive-level AI leadership (Chief AI Officer or equivalent). The organization understands AI's limitations as well as its capabilities.

Your priority: Competitive differentiation through proprietary AI capabilities, AI ethics and governance at scale, and exploring frontier technologies (agents, multimodal AI, on-device AI).

Level 5: AI-First

Description: The company's competitive advantage is fundamentally driven by AI. Business model, product design, and organizational structure are optimized around AI capabilities. Examples: Google, Tesla (Autopilot), Two Sigma, Palantir.

Your priority: Maintaining and extending the AI advantage while managing the risk that the AI capabilities it rests on become commoditized.

Most companies reading this are at Level 1 or 2. The framework below is designed primarily for those stages, with guidance for advancing to Level 3.

Step 1: Define the Business Problem, Not the AI Solution

The single most common mistake in AI strategy is starting with the technology. "We need a chatbot." "Let's use machine learning for X." "Everyone's using generative AI, we should too."

Start with business problems instead.

The Business Problem Audit

Gather your leadership team and answer these questions:

- Where do we lose the most money? Fraud, errors, inefficiency, customer churn, bad pricing decisions.

- Where do we spend the most human time on repetitive tasks? Data entry, document review, report generation, customer query triage.

- Where are our decisions limited by the speed or scale of human analysis? Pricing thousands of products, monitoring millions of transactions, personalizing experiences for millions of customers.

- Where do our competitors have an advantage that appears to be data- or automation-driven?

Each answer is a potential AI use case. But not every problem needs AI — some are better solved by better processes, better software, or more people. AI is the right tool when:

- The task involves pattern recognition across large datasets

- Speed or scale exceeds human capability

- The cost of the AI's errors is lower than the cost of doing the task manually

- Sufficient historical data exists to train or evaluate models

Example: Insurance Claims

Business problem: Claims processing takes 14 days on average. Customers are dissatisfied. Competitors process simple claims in 48 hours.

Analysis: 60% of claims are straightforward (clear liability, standard damage, documented costs). These take 14 days because they wait in the same queue as complex claims.

AI opportunity: Auto-triage and fast-track simple claims using ML classification. Route complex claims to senior adjusters. Target: reduce simple claim processing to 3 days.

Not an AI opportunity: The 14-day timeline for complex claims is driven by investigation requirements and negotiation — tasks where human judgment is essential. AI can assist (document extraction, fraud pattern detection) but can't replace the adjuster.
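To make the triage idea concrete, here is a minimal Python sketch of the routing step, assuming a classifier already outputs the probability that a claim is straightforward. The `Claim` fields, the separate fraud flag, and the 0.9 threshold are hypothetical. The design choice it illustrates: only confident "simple" predictions skip the queue, and anything uncertain defaults to a human adjuster.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    claim_id: str
    p_simple: float   # hypothetical model output: probability the claim is straightforward
    fraud_flag: bool  # hypothetical output of a separate fraud-pattern check


FAST_TRACK_THRESHOLD = 0.9  # assumed value; tune it against the pilot's error budget


def route(claim: Claim) -> str:
    """Fast-track only confident 'simple' predictions; everything else goes to an adjuster."""
    if claim.fraud_flag:
        return "senior_adjuster"
    if claim.p_simple >= FAST_TRACK_THRESHOLD:
        return "fast_track"
    return "senior_adjuster"


print(route(Claim("C-1001", 0.95, False)))
print(route(Claim("C-1002", 0.62, False)))
```

Raising the threshold sends more claims to people and fewer errors to the fast track; lowering it does the opposite. That trade-off is a business decision, not a modeling one.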

Step 2: Prioritize Use Cases With the Impact-Feasibility Matrix

You'll generate more AI opportunities than you can pursue. Prioritize ruthlessly using two dimensions.

Business Impact

Score each opportunity on:

- Revenue potential: Will this increase sales, improve pricing, or reduce churn?

- Cost reduction: Will this reduce labor costs, error costs, or processing time?

- Strategic value: Does this build a competitive advantage or create a data asset?

- Customer experience: Will customers notice and value the improvement?

Technical Feasibility

Score each opportunity on:

- Data availability: Do you have sufficient, high-quality data to train or evaluate models?

- Technical complexity: Is this a well-understood ML problem (classification, regression, NLP) or a research-grade challenge?

- Integration difficulty: How deeply does this need to integrate with existing systems?

- Regulatory constraints: Are there compliance requirements (explainability, bias testing, audit trails) that add complexity?

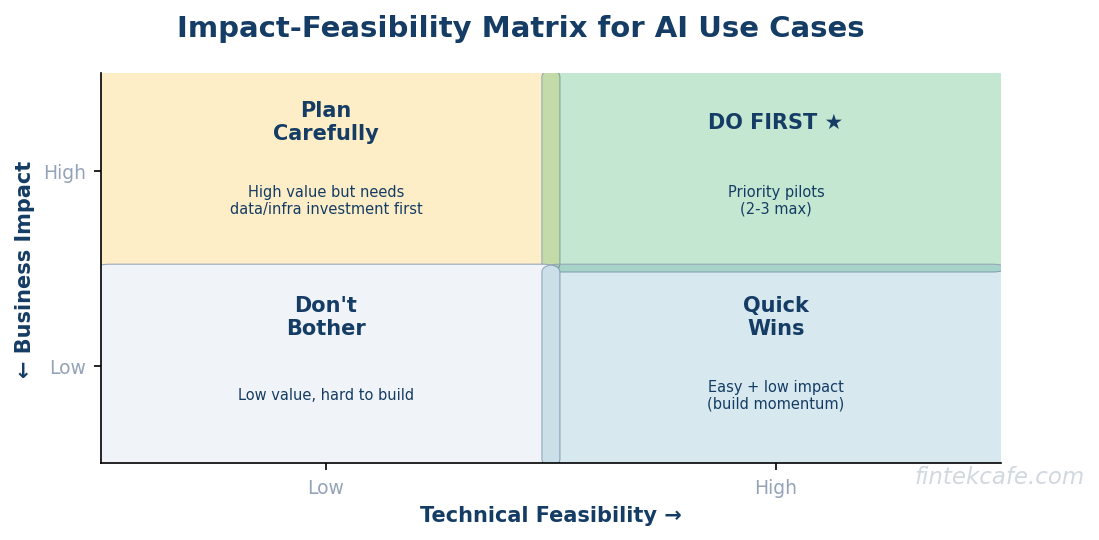

The Four Quadrants

| | High Feasibility | Low Feasibility |
|---|---|---|
| High Impact | Do first. These are your priority pilots. | Plan carefully. High value but needs investment in data or infrastructure first. |
| Low Impact | Quick wins. Do these if resources allow. Good for building momentum. | Don't bother. Low value and hard to build. |
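One way to apply the matrix consistently is to score each criterion from 1 to 5 and average within each dimension. The sketch below assumes that scale, a cutoff of 3.0 between "high" and "low", and that feasibility criteria are scored so that higher means easier (fewer regulatory constraints scores higher, for example). The candidate names and scores are made up for illustration.

```python
from statistics import mean


def quadrant(impact_scores: list[int], feasibility_scores: list[int], cutoff: float = 3.0) -> str:
    """Place a use case in the impact-feasibility matrix from 1-5 criterion scores."""
    high_impact = mean(impact_scores) >= cutoff            # revenue, cost, strategy, customer
    high_feasibility = mean(feasibility_scores) >= cutoff  # data, complexity, integration, regulation
    if high_impact and high_feasibility:
        return "Do first"
    if high_impact:
        return "Plan carefully"
    if high_feasibility:
        return "Quick wins"
    return "Don't bother"


# Hypothetical candidates: (impact scores, feasibility scores)
candidates = {
    "claims auto-triage": ([5, 5, 3, 5], [4, 4, 3, 3]),
    "underwriting model for a new product line": ([4, 4, 5, 3], [2, 2, 2, 3]),
    "internal meeting summaries": ([2, 2, 1, 2], [5, 4, 4, 5]),
}
for name, (impact, feasibility) in candidates.items():
    print(f"{name}: {quadrant(impact, feasibility)}")
```

Simple averaging treats every criterion as equally important; weighting them differently is a reasonable refinement if leadership agrees on the weights up front.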

Practical advice: Start with 2-3 opportunities in the "Do first" quadrant. Resist the temptation to launch more than three initiatives simultaneously. The insurance company that launched twelve projects at once failed not because the ideas were bad, but because attention, talent, and data infrastructure were spread too thin.

Step 3: Run a Structured Pilot

A pilot is not a proof-of-concept. A PoC asks "can this work technically?" A pilot asks "does this work in our business, with our data, at our scale, with our people?"

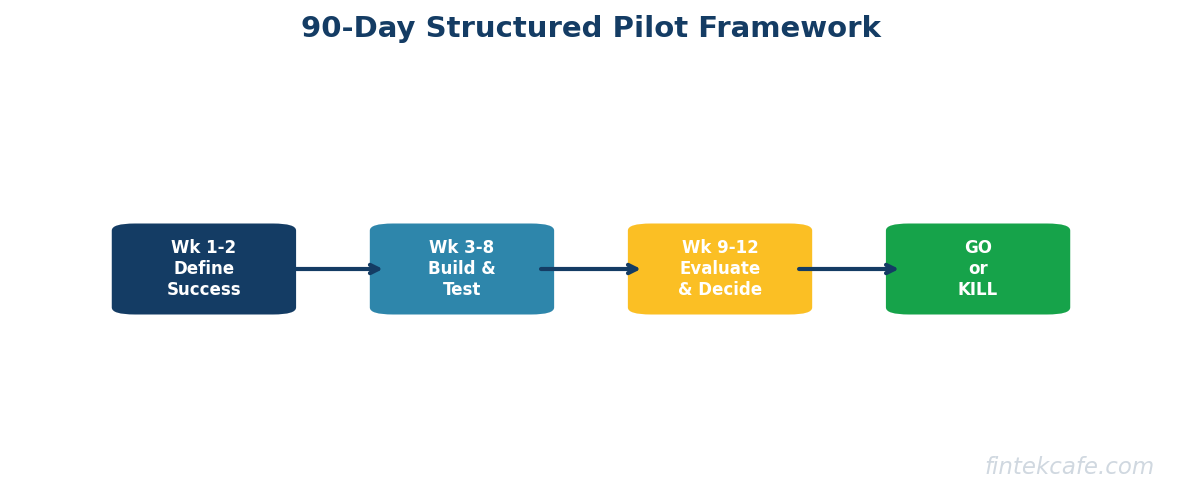

The 90-Day Pilot Framework

Weeks 1-2: Define Success Criteria

Before writing a line of code, define:

- The baseline metric. What is current performance? (Claims processing: 14 days average)

- The target metric. What does success look like? (Claims processing: 3 days for simple claims)

- The minimum viable improvement. Below what threshold is this not worth pursuing? (If we only get to 10 days, it's not worth the investment)

- The measurement method. How will you measure? (Sample of 500 claims, A/B test against current process)

Weeks 3-8: Build and Test

- Use real data, not synthetic data. Models trained on clean, curated datasets often fail on messy real-world data.

- Build the simplest model that could work. Start with logistic regression or decision trees before jumping to deep learning. Simple models are easier to debug, explain, and maintain.

- Test with actual users. Put the AI output in front of the people who will use it and watch what happens. Do they trust it? Does it change their workflow? Where does it fail?
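A decision stump (a decision tree with a single split) is about as simple as a model gets, which makes it a useful baseline. The pure-Python sketch below fits one by brute force; in practice you would more likely use scikit-learn's `DecisionTreeClassifier(max_depth=1)` or `LogisticRegression`. The claim features, values, and labels are hypothetical, and a four-row dataset is only a toy.

```python
def fit_stump(rows: list[dict[str, float]], labels: list[int]) -> tuple[str, float, int]:
    """Find the single feature/threshold split that best separates 0/1 labels.

    Returns (feature, threshold, label_if_above): predict label_if_above when
    row[feature] > threshold, otherwise the other label.
    """
    best = None  # (accuracy, feature, threshold, label_if_above)
    for feature in rows[0]:
        for threshold in sorted({row[feature] for row in rows}):
            for label_if_above in (0, 1):
                correct = sum(
                    (label_if_above if row[feature] > threshold else 1 - label_if_above) == y
                    for row, y in zip(rows, labels)
                )
                accuracy = correct / len(labels)
                if best is None or accuracy > best[0]:
                    best = (accuracy, feature, threshold, label_if_above)
    _, feature, threshold, label_if_above = best
    return feature, threshold, label_if_above


def predict(stump: tuple[str, float, int], row: dict[str, float]) -> int:
    feature, threshold, label_if_above = stump
    return label_if_above if row[feature] > threshold else 1 - label_if_above


# Hypothetical historical claims: 1 = straightforward, 0 = complex.
history = [
    {"estimated_cost": 1200, "parties_involved": 1},
    {"estimated_cost": 900, "parties_involved": 1},
    {"estimated_cost": 15000, "parties_involved": 3},
    {"estimated_cost": 22000, "parties_involved": 2},
]
outcomes = [1, 1, 0, 0]
stump = fit_stump(history, outcomes)
print(stump)
```

A baseline like this also gives the pilot a number that any more complex model has to beat before its extra cost is justified.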

Weeks 9-12: Evaluate and Decide

- Compare against the baseline. Did the pilot hit the target metric? The minimum viable improvement?

- Calculate the full cost. Include engineering time, data preparation, infrastructure, ongoing maintenance — not just the API bill.

- Assess the human factors. Are users adopting it? Are they overriding the AI's decisions? If so, why?

- Make a go/no-go decision. Scale, iterate, or kill. No pilot purgatory.
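The go/no-go comparison is easier to keep honest if the thresholds from weeks 1-2 are written down as data before results arrive. A minimal sketch, assuming a single metric: the 14/3/10-day figures come from the claims example above, while the pilot sample values are invented. Mapping each outcome to scale, iterate, or kill remains a judgment call.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class SuccessCriteria:
    """Pilot thresholds fixed in weeks 1-2, before any code is written."""
    baseline: float        # current performance, kept for reporting (e.g. 14 days)
    target: float          # what success looks like (e.g. 3 days for simple claims)
    minimum_viable: float  # at or beyond this, not worth pursuing (e.g. 10 days)
    lower_is_better: bool = True

    def assess(self, observed: float) -> str:
        # Flip the sign for metrics where higher is better (accuracy, revenue).
        sign = 1 if self.lower_is_better else -1
        if sign * (observed - self.target) <= 0:
            return "target met"
        if sign * (observed - self.minimum_viable) < 0:
            return "minimum viable improvement met"
        return "below minimum viable improvement"


claims = SuccessCriteria(baseline=14, target=3, minimum_viable=10)
pilot_days = [2.0, 3.5, 2.5, 3.0, 3.0]  # hypothetical processing times from the pilot arm
print(claims.assess(mean(pilot_days)))
```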

The Kill Criteria

Define in advance the conditions under which you'll kill the pilot. This prevents the sunk cost fallacy from keeping failed projects alive.

Kill the pilot if:

- Performance is below the minimum viable improvement after 90 days

- Data quality issues are fundamental (not fixable with more cleaning)

- User adoption is below 30% after training and support

- The cost per unit of improvement exceeds the value created
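The kill criteria above can be encoded as an explicit checklist that is agreed on before the pilot starts, so the day-90 review is a lookup rather than a debate. This is a sketch; the field names are illustrative, and the 30% adoption floor is the one stated above.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    """Measured at day 90 (field names are illustrative)."""
    met_minimum_viable_improvement: bool
    data_issues_fundamental: bool   # not fixable with more cleaning
    adoption_rate: float            # share of target users using the tool, 0.0-1.0
    cost_per_unit_improvement: float
    value_per_unit_improvement: float


ADOPTION_FLOOR = 0.30  # the 30% threshold from the kill criteria


def kill_reasons(result: PilotResult) -> list[str]:
    """A non-empty list means at least one pre-agreed kill criterion has been triggered."""
    reasons = []
    if not result.met_minimum_viable_improvement:
        reasons.append("below minimum viable improvement after 90 days")
    if result.data_issues_fundamental:
        reasons.append("fundamental data quality issues")
    if result.adoption_rate < ADOPTION_FLOOR:
        reasons.append("user adoption below 30% after training and support")
    if result.cost_per_unit_improvement > result.value_per_unit_improvement:
        reasons.append("cost per unit of improvement exceeds value created")
    return reasons


result = PilotResult(True, False, 0.22, 40.0, 95.0)  # hypothetical day-90 numbers
print(kill_reasons(result))
```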

Step 4: Build or Buy?

Once a pilot succeeds, you face the build-vs-buy decision. This is where many companies waste the most money.

When to Buy (Use Commercial AI Products)

- The use case is common across industries (chatbots, document processing, fraud detection)

- Speed to deployment matters more than customization

- You don't have a data advantage — your data isn't meaningfully different from competitors'

- The AI is not a competitive differentiator — it's operational infrastructure

Examples: Customer service AI (Intercom, Zendesk AI), expense management (Ramp, Brex), code assistance (GitHub Copilot), document processing (Docusign AI, Hyperscience).

When to Build (Develop Custom AI)

- You have proprietary data that creates a genuine advantage

- The use case is unique to your business or industry

- AI capability is a core competitive differentiator

- Commercial products can't meet your regulatory or integration requirements

Examples: Proprietary credit scoring (Upstart), personalized recommendation engines (Netflix, Spotify), specialized fraud detection for novel financial products.

The Hidden Third Option: Buy Then Customize

Most AI applications fall in the middle. You buy a commercial platform and customize it with your data. As a rough rule of thumb, this captures 80% of custom AI value at 20% of the cost.

Example: Use a commercial LLM (Claude, GPT-4) via API, fine-tune or prompt-engineer it with your company's knowledge base, and deploy it through a commercial platform (Microsoft Copilot Studio, Amazon Bedrock). You get a custom AI assistant without building model infrastructure from scratch.
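The "prompt-engineer it with your company's knowledge base" step usually means retrieving relevant internal passages and placing them in the prompt. The sketch below assumes a tiny in-memory knowledge base and uses word overlap as a crude stand-in for the embedding search a production system would typically use. The documents are hypothetical, and the actual model call is left out because each vendor's SDK differs.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for real text processing."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, knowledge_base: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of the k passages sharing the most words with the question."""
    q_words = tokenize(question)
    return sorted(
        knowledge_base,
        key=lambda doc_id: len(q_words & tokenize(knowledge_base[doc_id])),
        reverse=True,
    )[:k]


def build_prompt(question: str, knowledge_base: dict[str, str], k: int = 2) -> str:
    """Ground the model's answer in retrieved company passages."""
    context = "\n\n".join(
        f"[{doc_id}] {knowledge_base[doc_id]}" for doc_id in retrieve(question, knowledge_base, k)
    )
    return (
        "Answer using only the company documents below. "
        "If they do not contain the answer, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


# Hypothetical knowledge base.
knowledge_base = {
    "travel-policy": "Employees may book economy flights for trips under six hours.",
    "expense-limits": "Meal expenses are reimbursed up to 75 dollars per day.",
    "pto": "Full-time staff accrue 20 days of paid time off per year.",
}
prompt = build_prompt("What is the daily meal expense limit?", knowledge_base, k=1)
print(prompt)  # this string would be sent to the LLM through your vendor's SDK
```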

For a deeper framework on build-vs-buy decisions in technology, the Technology for Executives course covers vendor evaluation, TCO analysis, and strategic technology decisions.

Step 5: Get Your Data Ready

AI is only as good as the data it learns from. Most AI project failures are data failures, not model failures.

The Data Readiness Checklist

Availability: Do you have the data the model needs? If you want to predict customer churn, do you have historical data on which customers churned and why? If the data doesn't exist, you need to start collecting it — which means your AI timeline extends by 6-12 months.

Quality: Is the data accurate, complete, and consistent? A fraud detection model trained on mislabeled data (legitimate transactions marked as fraud, or vice versa) can be worse than no model at all.

Volume: Do you have enough data? Machine learning models typically need thousands to millions of examples. With only 50 labeled examples, traditional ML is unlikely to work well, though modern LLMs can handle small-data scenarios through few-shot learning and fine-tuning.

Accessibility: Can your AI team actually access the data? In many organizations, data sits in silos — the CRM team owns customer data, the finance team owns transaction data, the ops team owns operational data. Breaking down these silos is often the hardest part of AI readiness.

Governance: Do you have permission to use this data for AI? Privacy regulations (GDPR, CCPA), contractual restrictions, and ethical considerations all constrain what data you can feed into models. Establish data governance policies before you build, not after.
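Some of this checklist can be automated. The sketch below profiles a labeled dataset for volume, completeness, exact duplicates, and label balance; availability, accessibility, and governance are organizational questions it cannot answer. It assumes flat records with hashable values, and the field names are hypothetical.

```python
from collections import Counter


def readiness_report(records: list[dict], label_field: str, required_fields: list[str]) -> dict:
    """Profile a labeled dataset for volume, completeness, duplicates, and label balance."""
    if not records:
        raise ValueError("no records: the data may not exist yet")
    n = len(records)
    missing_rate = {
        f: sum(1 for r in records if r.get(f) in (None, "")) / n for f in required_fields
    }
    # Exact duplicate rows often signal pipeline or join problems.
    unique_rows = {tuple(sorted(r.items())) for r in records}
    labels = Counter(r[label_field] for r in records if r.get(label_field) not in (None, ""))
    return {
        "rows": n,
        "missing_rate": missing_rate,
        "duplicate_rows": n - len(unique_rows),
        "label_counts": dict(labels),
    }


# Hypothetical claims extract.
records = [
    {"claim_id": 1, "amount": 1200.0, "label": "simple"},
    {"claim_id": 2, "amount": None, "label": "complex"},
    {"claim_id": 2, "amount": None, "label": "complex"},
    {"claim_id": 3, "amount": 900.0, "label": "simple"},
]
report = readiness_report(records, "label", ["claim_id", "amount"])
print(report)
```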

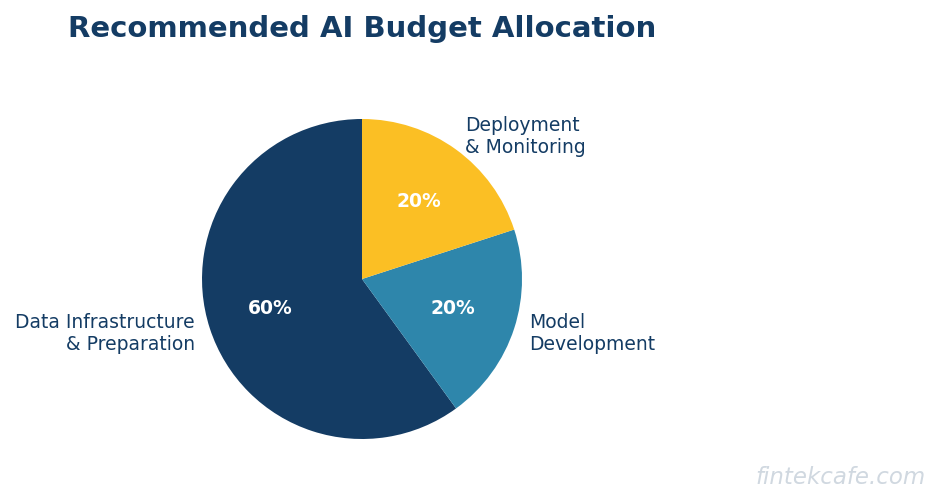

The Data Investment

Companies that succeed with AI invest disproportionately in data infrastructure — data warehouses, data quality tools, feature stores, data catalogs — rather than in models. The model is often the easy part. Getting clean, accessible, governed data to the model is the hard part.

Budget allocation for a successful AI program: 60% data infrastructure and preparation, 20% model development, 20% deployment and monitoring. Most companies invert this ratio, spending 60% on models and wondering why they fail.

Step 6: Build the Team

AI requires specific skills, but you don't need to hire a hundred data scientists. The team structure depends on your maturity level.

Level 1-2: The Starter Team (3-5 People)

- 1 AI/ML Lead: Technical leader who understands both the technology and the business context. This is the hardest hire — you need someone who can explain model limitations to executives and business requirements to engineers.

- 1-2 Data Engineers: Build and maintain the data pipelines that feed models. More important than data scientists in the early stages.

- 1 Data Scientist/ML Engineer: Builds and evaluates models.

- 1 Product Manager (part-time or full-time): Translates business requirements into AI project specifications. Manages stakeholders and defines success metrics.

Level 3: The Scaled Team (10-20 People)

Add:

- MLOps engineers to manage model deployment, monitoring, and lifecycle

- Specialized data scientists for different domains (NLP, computer vision, forecasting)

- AI ethics/governance specialist (can be part-time or shared)

- Analytics engineers to bridge the gap between data infrastructure and business intelligence

The Build-vs-Hire Decision

For Level 1-2 companies, consider starting with external AI consultants or managed services for the first pilot. This lets you learn what skills you actually need before making permanent hires. The top fintech companies have built large AI teams, but they started with small, focused groups.

Avoid the common mistake of hiring a team of data scientists without data engineers. Data scientists spend 80% of their time cleaning and preparing data if there's no data engineering support — an expensive misallocation of talent.

Step 7: Establish Governance

AI governance isn't bureaucracy — it's the framework that lets you deploy AI confidently and at scale. Without governance, every AI deployment is a liability waiting to happen.

The Minimum Viable Governance Framework

Model inventory. Maintain a registry of every AI model in production: what it does, what data it uses, who owns it, when it was last evaluated, and what its known limitations are.

Risk tiering. Not every model needs the same oversight. A model that recommends blog posts needs less governance than a model that approves credit applications. Tier your models by impact:

| Tier | Impact | Examples | Governance |
|---|---|---|---|
| 1 | Low | Content recommendations, internal search | Annual review, basic monitoring |
| 2 | Medium | Customer segmentation, inventory forecasting | Quarterly review, bias testing, performance monitoring |
| 3 | High | Credit decisions, fraud detection, pricing | Monthly review, regulatory compliance, explainability requirements, human-in-the-loop |
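A model inventory can start as a simple structured record per model, with the tier driving the review cadence from the table. A minimal sketch, approximating "annual, quarterly, monthly" as day counts; the model names, owners, and dates are hypothetical, and the other tier requirements (bias testing, explainability) are not modeled here.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# Review cadence from the tier table, approximated in days: annual, quarterly, monthly.
REVIEW_INTERVAL = {1: timedelta(days=365), 2: timedelta(days=91), 3: timedelta(days=30)}


@dataclass
class ModelRecord:
    name: str
    purpose: str
    data_sources: list[str]
    owner: str
    tier: int             # 1 = low, 2 = medium, 3 = high impact
    last_evaluated: date
    known_limitations: list[str] = field(default_factory=list)

    def review_due(self, today: date) -> bool:
        return today - self.last_evaluated >= REVIEW_INTERVAL[self.tier]


def overdue_reviews(inventory: list[ModelRecord], today: date) -> list[str]:
    return [m.name for m in inventory if m.review_due(today)]


# Hypothetical inventory entries.
inventory = [
    ModelRecord("credit-approval", "approve or refer credit applications",
                ["bureau data", "application form"], "risk-analytics", 3,
                date(2026, 1, 1), ["thin-file applicants"]),
    ModelRecord("blog-recommender", "suggest related articles",
                ["clickstream"], "marketing", 1, date(2025, 6, 1)),
]
print(overdue_reviews(inventory, date(2026, 2, 15)))
```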

Bias testing. Before deployment and on an ongoing basis, test models for discriminatory outcomes across protected categories (race, gender, age). The EU AI Act requires this for high-risk applications, and the CFPB has enforcement authority over AI-driven credit decisions in the US.
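A common first screen is the adverse impact ratio behind the "four-fifths rule" from the US Uniform Guidelines on Employee Selection Procedures: compare each group's favorable-outcome rate with the most favored group's and flag ratios below 0.8. The sketch assumes decisions labeled by group (the groups and counts here are hypothetical). It is a screening heuristic, not the legal standard for credit or any other domain, and a full bias review usually examines several metrics.

```python
from collections import defaultdict


def adverse_impact_ratios(outcomes: list[tuple[str, bool]],
                          threshold: float = 0.8) -> dict[str, tuple[float, bool]]:
    """For each group: (selection rate / highest group's rate, flagged below threshold?)."""
    totals, favorable = defaultdict(int), defaultdict(int)
    for group, approved in outcomes:
        totals[group] += 1
        favorable[group] += int(approved)
    rates = {g: favorable[g] / totals[g] for g in totals}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no favorable outcomes in any group")
    return {g: (rate / best, rate / best < threshold) for g, rate in rates.items()}


# Hypothetical approval decisions from a validation set.
decisions = ([("group_a", True)] * 80 + [("group_a", False)] * 20
             + [("group_b", True)] * 50 + [("group_b", False)] * 50)
print(adverse_impact_ratios(decisions))
```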

Explainability. For Tier 3 models, you must be able to explain why the model made a specific decision — not just that it did. "The model denied the loan" is not acceptable. "The model identified high debt-to-income ratio and recent delinquency as the primary factors" is. Techniques like SHAP values and counterfactual explanations make this possible.
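For linear models such as logistic regression, and assuming independent features, the SHAP value of feature i in log-odds space reduces to w_i(x_i - E[x_i]): its weight times how far the applicant sits from the average. The sketch below uses that to produce reason codes like the ones quoted above. The weights and applicant values are hypothetical; for nonlinear models, the open-source `shap` package provides general explainers.

```python
def linear_attributions(weights: dict[str, float], x: dict[str, float],
                        means: dict[str, float]) -> dict[str, float]:
    """Each feature's contribution to the log-odds score, relative to the average applicant.

    For a linear model with independent features, these equal the features' SHAP values.
    """
    return {f: w * (x[f] - means[f]) for f, w in weights.items()}


def top_reasons(weights: dict[str, float], x: dict[str, float],
                means: dict[str, float], n: int = 2) -> list[str]:
    """Features that pushed this applicant's risk score up the most (reasons for a denial)."""
    contributions = linear_attributions(weights, x, means)
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    return [f for f, c in ranked if c > 0][:n]


# Hypothetical model: log-odds of default = intercept + sum(weight * feature).
weights = {"debt_to_income": 4.0, "recent_delinquencies": 0.9, "years_employed": -0.1}
means = {"debt_to_income": 0.30, "recent_delinquencies": 0.2, "years_employed": 6.0}
applicant = {"debt_to_income": 0.55, "recent_delinquencies": 2, "years_employed": 5.0}
print(top_reasons(weights, applicant, means))
```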

Incident response. Define what happens when a model fails — wrong predictions, biased outcomes, data leaks. Who is notified? What is the escalation path? How quickly can you disable the model and revert to a manual process?
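The "how quickly can you disable the model" question is easiest to answer when every model sits behind a switch with a manual fallback. A minimal sketch, assuming decisions are plain strings and the manual process is represented by a queue; the class name and the logging-based alerting are illustrative, and real notification and escalation would hook in where the comments indicate.

```python
import logging
from typing import Any, Callable

logger = logging.getLogger("model_incidents")


class ModelGate:
    """Wraps a model so it can be switched off and fall back to the manual process."""

    def __init__(self, name: str, predict: Callable[[Any], str], manual_queue: list):
        self.name = name
        self.predict = predict
        self.manual_queue = manual_queue
        self.enabled = True

    def disable(self, reason: str) -> None:
        # In a real system, this is where on-call and the model owner would be notified.
        self.enabled = False
        logger.warning("model %s disabled: %s", self.name, reason)

    def decide(self, case: Any) -> str:
        if not self.enabled:
            self.manual_queue.append(case)
            return "manual_review"
        try:
            return self.predict(case)
        except Exception:
            # A crashing model should degrade to the manual process, not block the queue.
            logger.exception("model %s failed; routing case to manual review", self.name)
            self.manual_queue.append(case)
            return "manual_review"


queue: list = []
gate = ModelGate("claims-triage", lambda claim: "fast_track", queue)  # hypothetical model
print(gate.decide({"claim_id": 1}))
gate.disable("bias alert from monitoring")
print(gate.decide({"claim_id": 2}))
```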

For detailed coverage of AI risk management and regulatory compliance, the AI for Executives course dedicates full modules to governance, bias, and the EU AI Act.

Step 8: Measure and Scale

The hardest part of AI strategy isn't launching pilots — it's measuring results and deciding what to scale.

The AI ROI Framework

For each AI initiative, track three categories of value:

Direct financial impact:

- Revenue generated or protected (upsell recommendations, churn prevention, pricing optimization)

- Cost reduced (labor hours saved × fully loaded cost, error reduction × cost per error)

- Speed improvement (processing time reduction × value of faster execution)
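The three direct-impact formulas translate into simple arithmetic. A sketch, assuming all inputs are annualized; the example figures (12,000 hours at a $65 fully loaded rate, 300 errors avoided at $1,200 each) are invented for illustration. Subtracting the full cost from the pilot evaluation turns the total into a net figure.

```python
def direct_financial_impact(
    hours_saved: float,
    loaded_hourly_cost: float,
    errors_avoided: float,
    cost_per_error: float,
    time_reduction: float = 0.0,
    value_per_unit_time: float = 0.0,
    revenue_protected: float = 0.0,
) -> dict[str, float]:
    """Annualized direct impact using the formulas listed above."""
    impact = {
        "labor": hours_saved * loaded_hourly_cost,            # hours saved x fully loaded cost
        "errors": errors_avoided * cost_per_error,            # error reduction x cost per error
        "speed": time_reduction * value_per_unit_time,        # time reduction x value of speed
        "revenue": revenue_protected,                         # generated or protected revenue
    }
    impact["total"] = sum(impact.values())
    return impact


impact = direct_financial_impact(hours_saved=12_000, loaded_hourly_cost=65,
                                 errors_avoided=300, cost_per_error=1_200)
print(impact)
```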

Indirect business impact:

- Customer satisfaction improvement (NPS, CSAT scores in AI-affected interactions)

- Employee satisfaction (reduced repetitive work, faster access to information)

- Risk reduction (fewer compliance violations, faster fraud detection)

Strategic value:

- Data asset creation (does this model generate data that makes future models better?)

- Competitive positioning (does this capability differentiate you in the market?)

- Organizational learning (is the team building skills that compound over time?)

The Scaling Decision

Scale an AI initiative when:

- Pilot results meet or exceed the target metric

- The cost of scaling is justified by projected ROI at full deployment

- The data pipeline and infrastructure can handle production volume

- Users have adopted the tool and provide positive feedback

- Governance requirements are met (bias testing, explainability, monitoring)

Kill an AI initiative when:

- Pilot results are below the minimum viable improvement

- The data quality problems are structural (would require rebuilding core systems)

- User adoption is low despite training and iteration

- The competitive landscape has changed (a commercial product now does this better and cheaper)

The Scaling Playbook

- Expand within the same use case. If a fraud detection model works for credit card transactions, extend it to wire transfers and ACH.

- Replicate across business units. If customer churn prediction works in one product line, adapt it for others.

- Build shared infrastructure. As you scale multiple models, shared data pipelines, feature stores, model serving infrastructure, and monitoring tools reduce the marginal cost of each new model.

- Establish a center of excellence. A cross-functional team that provides AI expertise to business units, maintains standards, and shares learnings. This prevents every business unit from reinventing the wheel.

Common Mistakes and How to Avoid Them

Mistake 1: Strategy by Imitation

"Competitor X launched an AI chatbot, so we need one too." Your competitor's use case may not be your best use case. Start from your own business problems, not from press releases.

Mistake 2: Hiring Before You Have Work

Companies hire data science teams, give them a budget, and say "find AI opportunities." This produces science projects, not business results. Define the use cases first, then hire the skills to execute them.

Mistake 3: Expecting Perfection

AI models are probabilistic — they'll be wrong some percentage of the time. The question isn't "is this perfect?" but "is this better than the current process?" A fraud model that catches 85% of fraud while flagging 2% of legitimate transactions is vastly better than the manual review that catches 40% of fraud. But executives who expect 100% accuracy will kill viable projects.
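The comparison is easier to weigh in counts than in percentages. Assuming, purely for illustration, 100,000 transactions with a 0.5% fraud rate, the sketch below turns the recall and false-positive figures above into expected counts. The text gives no false-positive rate for manual review, so that figure is left unset rather than guessed.

```python
from typing import Optional


def fraud_outcomes(transactions: int, fraud_rate: float, recall: float,
                   false_positive_rate: Optional[float] = None) -> dict[str, Optional[int]]:
    """Expected counts (rounded) for a detector with the given recall and false-positive rate."""
    fraud = transactions * fraud_rate
    caught = fraud * recall
    flagged = None
    if false_positive_rate is not None:
        flagged = round((transactions - fraud) * false_positive_rate)
    return {"fraud_caught": round(caught), "fraud_missed": round(fraud - caught),
            "legitimate_flagged": flagged}


# Hypothetical volume and fraud rate; recall and false-positive figures from the example above.
model = fraud_outcomes(100_000, 0.005, recall=0.85, false_positive_rate=0.02)
manual = fraud_outcomes(100_000, 0.005, recall=0.40)
print(model)
print(manual)
```

Under these assumptions the model catches 425 fraudulent transactions versus 200 for manual review, while flagging 1,990 legitimate ones that still need handling. Whether that trade is worth it depends on the cost of a missed fraud versus the cost of a false alarm.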

Mistake 4: Ignoring Change Management

AI changes how people work. A claims adjuster whose workflow changes because an AI now triages cases needs training, clear communication about how their role evolves, and involvement in the design process. Technology adoption without change management produces expensive tools that nobody uses.

Mistake 5: No Feedback Loop

Models degrade over time as the world changes. A fraud model trained on 2024 data may miss 2026 fraud patterns. A demand forecasting model trained before a pandemic can't predict post-pandemic behavior. Build monitoring and retraining into every deployment — not as an afterthought, but as a core requirement.
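One widely used monitoring signal is the population stability index (PSI), which compares a feature's binned distribution at training time with its distribution in recent production data. A sketch, assuming you already bin each feature consistently; the bin counts are hypothetical, and the 0.1 and 0.25 cutoffs are common industry rules of thumb rather than a standard.

```python
import math


def population_stability_index(expected: list[int], actual: list[int], eps: float = 1e-6) -> float:
    """PSI between a feature's binned distribution at training time and in production."""
    e_total, a_total = sum(expected), sum(actual)
    psi = 0.0
    for e, a in zip(expected, actual):
        e_pct = max(e / e_total, eps)  # floor empty bins to avoid log(0)
        a_pct = max(a / a_total, eps)
        psi += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return psi


def drift_status(psi: float) -> str:
    # Rules of thumb, not a standard: < 0.1 stable, 0.1-0.25 moderate, > 0.25 significant.
    if psi < 0.1:
        return "stable"
    if psi <= 0.25:
        return "moderate shift: investigate"
    return "significant shift: consider retraining"


training_bins = [250, 250, 250, 250]  # hypothetical transaction-amount bins at training time
recent_bins = [100, 200, 300, 400]    # the same bins over the most recent month
score = population_stability_index(training_bins, recent_bins)
print(round(score, 3), drift_status(score))
```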

Key Takeaways

- Start with business problems, not technology. The best AI strategy begins with a clear-eyed audit of where your business loses money, wastes time, or misses opportunities — then evaluates which of those problems AI can actually solve.

- Assess your maturity honestly. A Level 1 company needs a single pilot, not a 50-page AI strategy document. Match your ambition to your readiness.

- Prioritize ruthlessly. Use the impact-feasibility matrix. Pursue 2-3 initiatives, not twelve. Concentrated effort beats distributed experimentation.

- Run structured 90-day pilots with kill criteria. Define success before you start. Kill projects that don't meet minimum thresholds. No pilot purgatory.

- Invest 60% of your budget in data, not models. Data infrastructure and quality determine AI success more than algorithm selection. If your data isn't ready, your models won't work.

- Governance is an enabler, not a blocker. Model inventory, risk tiering, bias testing, and explainability let you deploy AI at scale with confidence.

- Measure ROI across direct, indirect, and strategic dimensions. Not every AI benefit shows up on the P&L in quarter one. But every AI initiative should have measurable outcomes.

FAQ

How much should we budget for an AI strategy?

Budget depends on maturity and ambition, but here are benchmarks. For a Level 1 company running its first pilot: $100,000 to $300,000 over 6 months (consulting, tooling, and a small team's time). For a Level 2 company scaling from pilots to production: $500,000 to $2 million annually (dedicated team, infrastructure, vendor contracts). For Level 3+ companies running AI at scale: 3-7% of the IT budget, or $2-20 million annually depending on company size. These are fully loaded costs including personnel, infrastructure, vendor licenses, and data preparation. The most common mistake is underbudgeting data infrastructure: plan for 60% of your AI budget going to data before any model development starts.

Do we need to hire a Chief AI Officer?

Not at Level 1 or 2. A dedicated AI leader (VP or Director level) who reports to the CTO or COO is sufficient until you have multiple AI models in production. A Chief AI Officer makes sense at Level 3+ when AI decisions affect corporate strategy, require board-level governance, and span multiple business units. What you do need from Day 1 is executive sponsorship — a C-suite leader who owns the AI strategy, removes organizational blockers, and holds teams accountable for results. Without sponsorship, AI initiatives get deprioritized whenever budgets tighten.

How long does it take to see ROI from AI?

For well-scoped pilots using commercial AI tools: 3-6 months to measurable results. For custom AI models using your own data: 6-12 months from project start to production, with ROI demonstrable within the first quarter of production deployment. For organization-wide AI transformation: 18-36 months to material impact on business metrics. The key accelerator is starting with use cases that have clear baselines and measurable outcomes. "Improve customer experience with AI" takes years to measure. "Reduce claims processing time from 14 days to 3 days using AI triage" shows ROI within months. Companies that report the fastest AI ROI are those that chose narrow, measurable first use cases rather than ambitious transformation programs.

What if we don't have enough data?

You have more options than you think. Modern large language models (Claude, GPT-4) require no task-specific training data: they work out of the box for text analysis, summarization, classification, and generation. You can deploy an AI assistant, document processor, or research tool tomorrow with zero proprietary data. For traditional ML use cases that require training data, consider synthetic data generation (creating artificial training data that mimics real patterns), transfer learning (starting from a pre-trained model and adapting it with limited data), and few-shot learning (modern LLMs can learn new tasks from just a few examples). If your data gap is fundamental (you simply don't collect the information you'd need), then the first step in your AI strategy is a data collection initiative, not an AI pilot. Budget 6-12 months of data collection before expecting model results.
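Few-shot learning with an LLM needs no training pipeline: the labeled examples go directly into the prompt. A minimal sketch of building such a prompt; the email categories and examples are hypothetical, and sending the prompt to a model is left to your vendor's SDK.

```python
def few_shot_prompt(instruction: str, examples: list[tuple[str, str]], new_input: str) -> str:
    """Show the model a handful of labeled examples, then ask it to label a new one."""
    lines = [instruction, ""]
    for text, label in examples:
        lines += [f"Input: {text}", f"Label: {label}", ""]
    lines += [f"Input: {new_input}", "Label:"]
    return "\n".join(lines)


# Hypothetical categories and examples.
prompt = few_shot_prompt(
    "Classify each customer email as BILLING, TECHNICAL, or OTHER.",
    [
        ("I was charged twice this month.", "BILLING"),
        ("The app crashes when I upload a file.", "TECHNICAL"),
        ("Do you have an office in Berlin?", "OTHER"),
    ],
    "My invoice shows the wrong amount.",
)
print(prompt)
```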

How do we handle AI and regulation?

The regulatory landscape is evolving fast. The EU AI Act, which entered into force in August 2024 with obligations phasing in through 2026 and 2027, classifies AI systems by risk level and imposes requirements for high-risk applications, including transparency, bias testing, human oversight, and documentation. In the US, the CFPB has enforcement authority over AI-driven consumer financial decisions (credit and banking), and the FTC has taken action against deceptive AI practices. For any AI system that affects consumers (pricing, credit, insurance, hiring), build regulatory compliance into the design from Day 1, not as a post-deployment retrofit. Specifically: maintain documentation of training data and model decisions, implement bias testing across protected categories, ensure human oversight for high-stakes decisions, and plan for the right to explanation (customers asking "why was I denied?"). The AI for Executives course covers the EU AI Act, SR 11-7, and practical compliance frameworks in detail.