Regulatory Risk in AI Deployment: What Leaders Underestimate

In 2022, the CFPB sent a clear message to every financial institution using AI for credit decisions: your algorithm is not above the law. Circular 2022-03 stated explicitly that complex AI models are not exempt from adverse action notice requirements. Lenders must explain denials, in plain language, to real people, regardless of how sophisticated the underlying model is. Many banks couldn't do it. They had deployed black-box models they couldn't interpret, let alone explain to a regulator. The Apple Card gender bias investigation by the New York Department of Financial Services in 2019 was an early warning. Most executives filed it under "interesting edge case." It wasn't an edge case. It was a preview.

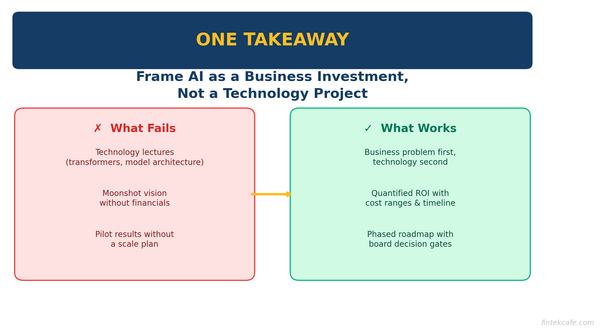

The central problem is not that AI is difficult to regulate. It's that most organizations are treating regulatory risk as a compliance checkbox when it is a strategic variable that will determine competitive outcomes. Companies that get this right will move faster, deploy with more confidence, and avoid the remediation costs that are quietly crippling their competitors. Companies that get it wrong will face fines, forced product withdrawals, and — in regulated industries — regulatory consent orders that constrain their entire business.

The Regulatory Landscape Is More Complex Than You Think

The first mistake executives make is assuming there is a single AI law to comply with. There isn't. There's a patchwork — and it's growing.

The EU AI Act is the most significant binding AI-specific legislation in force. It uses a risk-tiered approach: prohibited AI practices at the top, high-risk systems subject to strict requirements, and lower-risk systems subject to transparency obligations. What most US executives miss is the territorial scope in Article 2: if you place an AI system on the EU market, or its output is used in the EU, you are subject to the EU AI Act regardless of where your company is headquartered. A US fintech deploying a credit-scoring model to European customers is within that scope, and its obligations take effect as the Act's provisions phase in. That's not hypothetical. That's the law.

Article 6 of the EU AI Act, together with Annex III, defines high-risk AI systems. Credit scoring, employment screening, access to essential services, law enforcement: these are the categories. If your system falls into one of them, you face conformity assessments, technical documentation requirements, bias monitoring, and human oversight obligations. The fines for non-compliance are not symbolic: up to €35 million or 7% of global annual turnover, whichever is higher, for prohibited AI practices, and up to €15 million or 3% for violations of most other obligations, including the high-risk requirements.
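The "fixed amount or share of turnover, whichever is higher" rule is easy to misjudge for a large company, so here is a minimal sketch of the arithmetic. The function and tier names are hypothetical, and the example ceilings are assumptions taken from Article 99 of the regulation as adopted (Regulation (EU) 2024/1689); check them against the current text before relying on them.

```python
def fine_ceiling(global_turnover_eur: float, fixed_cap_eur: float, turnover_share: float) -> float:
    """Return the maximum fine: the higher of a fixed cap or a share of turnover."""
    return max(fixed_cap_eur, global_turnover_eur * turnover_share)


# Assumed ceilings (fixed cap in EUR, share of global annual turnover).
TIERS = {
    "prohibited_practice": (35_000_000, 0.07),
    "other_obligations": (15_000_000, 0.03),
}


def exposure(global_turnover_eur: float) -> dict[str, float]:
    """Maximum fine per tier for a company with the given global annual turnover."""
    return {
        name: fine_ceiling(global_turnover_eur, cap, share)
        for name, (cap, share) in TIERS.items()
    }


if __name__ == "__main__":
    # Hypothetical company with EUR 2 billion in global annual turnover.
    for tier, ceiling in exposure(2_000_000_000).items():
        print(f"{tier}: up to EUR {ceiling:,.0f}")
```

For a company of that size the percentage, not the fixed amount, sets the ceiling; for a small company the fixed amount dominates.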

The United States has no equivalent federal AI law. What it has is sector-specific regulation applied to AI systems by existing agencies — and those agencies are increasingly aggressive. The CFPB, OCC, Federal Reserve, and FDIC all have supervisory authority over AI systems deployed by the institutions they regulate. In healthcare, the FDA applies its medical device framework to AI-based software. In employment, the EEOC has made clear that algorithmic hiring tools are subject to Title VII. The regulatory exposure is real; it's just distributed across agencies rather than consolidated in a single statute.

The UK has taken a principles-based approach: no single AI law, but existing regulators applying their frameworks to AI. China has enacted sector-specific rules, including regulations on recommendation algorithms and generative AI. The strategic implication is this: a global company deploying AI faces a mosaic of obligations with no single compliance path. GDPR Article 22, which restricts solely automated decision-making that produces legal or similarly significant effects, has been in force since 2018 and is actively enforced. Organizations that haven't mapped their AI systems against Article 22 may already be out of compliance in Europe.

The Sector-Specific Trap

Generalizations about AI regulation are dangerous. The risk profile of a customer service chatbot is categorically different from a credit underwriting model or a clinical decision support tool. Executives who manage AI risk at the enterprise level without understanding sector-specific requirements are operating blind.

Financial Services carries the densest regulatory burden. SR 11-7, issued by the Federal Reserve in 2011, established model risk management requirements for banks. It was written for statistical models. Regulators are applying it to large language models and machine learning systems right now — the framework hasn't changed, but the technology has. SR 11-7 requires model validation, documentation of model development, and ongoing performance monitoring. Banks that deployed AI systems without SR 11-7-compliant documentation are exposed.

The Equal Credit Opportunity Act and Regulation B create a harder constraint: adverse action notices. If you deny credit, you must explain why, in specific, actionable terms. The CFPB's Circular 2022-03 made explicit that ECOA applies regardless of model complexity. A gradient boosting model with 300 features does not get an exemption. If you can't extract the principal reasons for a denial and communicate them in plain language, you cannot legally deploy that model for credit decisions in the United States. The Apple Card situation is instructive: when David Heinemeier Hansson publicly reported that he received twenty times the credit limit of his wife despite joint finances, the New York DFS launched an investigation. Goldman Sachs could not readily explain the disparity to the customers involved. The DFS's 2021 report found no fair lending violation, but it pointed to shortcomings in customer service and transparency around how the decisions were made. That's the explainability problem made concrete.
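To make "extract the top reasons for a denial" concrete, here is a minimal sketch for the simplest case: an additive scorecard, where each feature's contribution can be read off directly. Everything in it (feature names, weights, reference values, reason wording) is hypothetical. A gradient boosting model would need a separate attribution method to produce the per-feature contributions, and nothing here is a statement of what Regulation B accepts as sufficient.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    weight: float      # score points per unit of the feature (hypothetical)
    reference: float   # value for a typical approved applicant (hypothetical)
    reason: str        # plain-language text used in the notice


FEATURES = [
    Feature("utilization", -40.0, 0.30, "Credit card balances are high relative to limits"),
    Feature("late_payments", -15.0, 0.0, "Recent late payments on file"),
    Feature("years_of_history", 3.0, 8.0, "Length of credit history is short"),
]


def top_reasons(applicant: dict[str, float], k: int = 2) -> list[str]:
    """Return up to k reasons, ordered by how far each feature pulled the score down."""
    # Contribution relative to the reference applicant; negative means it hurt the score.
    deltas = [(f.weight * (applicant[f.name] - f.reference), f.reason) for f in FEATURES]
    harmful = sorted((d for d in deltas if d[0] < 0), key=lambda d: d[0])
    return [reason for _, reason in harmful[:k]]


if __name__ == "__main__":
    denied = {"utilization": 0.90, "late_payments": 2, "years_of_history": 2}
    for reason in top_reasons(denied):
        print(reason)
```

The design point is that reason text is tied to the features the model actually used, so the notice cannot drift away from the decision logic.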

Healthcare presents a different kind of risk. The FDA regulates AI as software as a medical device (SaMD) when it meets the definition of a medical device — and that definition is broad. Clinical decision support tools that go beyond displaying information and instead influence clinical decisions fall into FDA oversight. As of 2024, the FDA has cleared over 500 AI/ML-based medical devices. The pipeline of pending clearances is substantial. The risk for companies building "decision support" tools is reclassification: tools designed to stay below the FDA threshold are being reviewed against a stricter standard when they fail. HIPAA adds privacy obligations on top of device requirements. The EU MDR applies to devices used in European clinical settings. The regulatory surface area for a healthcare AI company is enormous.

Defense and dual-use applications represent an emerging and underappreciated category. ITAR controls the export of defense-related technology and technical data. AI models trained on export-controlled data, or designed for applications with military utility, may be subject to ITAR restrictions on who can access them and under what conditions. Export control rules are being actively updated to address AI, including rules governing access to advanced chips and model weights. Commercial companies building general-purpose AI with potential dual-use applications are not immune. The EO on AI in national security contexts has expanded the regulatory surface further.

The Compliance Cost Curve

The single biggest financial error organizations make around AI regulation is underestimating compliance costs — and underestimating them at precisely the wrong moment in the development lifecycle.

Compliance costs for AI systems are not linear. They rise faster than system complexity and deployment scale, and, critically, they multiply when addressed after deployment rather than before. The companies that discover this truth through a regulatory examination rather than through proactive planning pay a severe penalty.

Before deployment, compliance costs are manageable. Technical documentation, bias testing, explainability validation, and human oversight design are all cheaper when built into the system from the start. A bias audit during development costs a fraction of what it costs when ordered by a regulator post-incident. Explainability architecture built in at the model selection stage is qualitatively different from attempting to reverse-engineer explanations for a deployed black-box model.

At deployment, the requirements intensify. The EU AI Act mandates conformity assessments for high-risk systems. These are formal processes — not internal reviews. For financial institutions, SR 11-7 requires independent model validation before production deployment. Adverse action procedures must be designed, tested, and documented. Audit trail infrastructure must be in place before the first decision is made. None of this is free, and none of it can be fully retrofitted after the fact.

Post-deployment, the costs become operational. Ongoing model monitoring, drift detection, incident reporting, and regulatory examination preparation are recurring obligations. The OCC, CFPB, and Federal Reserve examine banks' AI systems. Being unprepared for examination costs money in two ways: direct remediation, and examination findings that constrain operations. As a rough planning figure, companies that build compliance in from the start spend approximately 10-20% more in the development phase, and the pattern of regulatory enforcement actions suggests they avoid 3-5x that cost when regulators eventually arrive.
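Drift detection, one of the recurring obligations above, is often implemented with a simple distribution comparison. The sketch below uses the Population Stability Index, a common industry metric that compares the share of cases in each score bin at validation time with the share in production. The choice of PSI, the bin count, and the thresholds in the comments are assumptions for illustration, not anything a regulator prescribes.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a production sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid a zero width if all baseline values are equal

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so out-of-range production values land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]             # scores at validation time
    production = [min(s + 0.3, 0.99) for s in baseline]  # scores after a hypothetical shift
    value = psi(baseline, production)
    # Common rule of thumb (a convention, not a rule): < 0.1 stable, > 0.25 investigate.
    print(f"PSI = {value:.2f}")
```

Logging this value on a schedule, along with the action taken when it crosses a threshold, is the kind of record an examiner can actually review.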

The EU AI Act fines, up to 7% of global turnover for prohibited practices, are the headline number. The real cost is often the remediation: pulling a deployed product, retraining a model, rebuilding documentation, and managing the reputational fallout. That's where companies actually lose.

Build vs. Buy: The Regulatory Lens

The build-vs-buy decision for AI systems is usually framed around cost, speed to market, technical capability, and vendor lock-in. These are real considerations. The one that organizations consistently underweight is regulatory liability allocation.

When you build, you own the risk entirely. Your model, your documentation, your examination exposure. This is not entirely a disadvantage. You control the explainability architecture, the audit trail, the monitoring infrastructure. You can design the system to be SR 11-7 compliant or ECOA-ready from the start. The cost is higher upfront; the regulatory control is superior.

When you buy, you are still the regulated entity. This point cannot be overstated. The OCC's third-party risk management guidance (OCC Bulletin 2023-17) is explicit: banks are responsible for the risks posed by third-party models they deploy. The vendor is not your regulatory shield. The EU AI Act codifies the same principle — deployers of high-risk AI systems carry compliance obligations even when they did not build the system. Deploying a vendor's credit model means you are responsible for validating it under SR 11-7, documenting it, testing it for bias under ECOA, and explaining its decisions to regulators. The vendor's documentation, if it exists, is a starting point. It is not a substitute for your own.

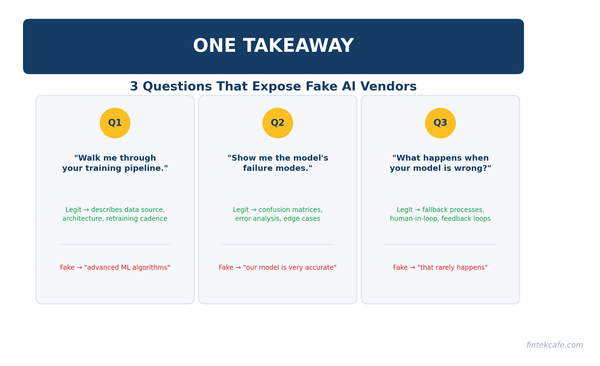

The correct question is not "should we build or buy?" It is "can we explain this model's decisions to a regulator, and do we have the documentation to prove it?" If the answer is no — and if the vendor cannot provide what you need — that is a walk-away condition. Multiple banks have made exactly this calculation: a vendor's model produced superior predictive performance, and the bank chose a less accurate but fully explainable alternative. That is the regulatory lens working correctly. In the long run, the explainable model that survives examination creates more value than the accurate model that triggers enforcement.

What Leaders Consistently Underestimate

The extraterritorial reach of the EU AI Act. US executives routinely assume European regulation is a European problem. The EU AI Act reaches AI systems placed on the EU market, and systems whose output is used in the EU, regardless of where the deploying company is based. A US company with European customers using an AI-powered service is within scope. Most have not mapped their AI inventory against this exposure.

The speed of regulatory evolution. SR 11-7 was written in 2011 for traditional statistical models. Regulators are applying it to LLMs today without waiting for updated guidance. The CFPB's Circular 2022-03 was issued without Congressional action. Regulatory interpretation moves faster than rulemaking. Companies that are waiting for the regulatory picture to "clarify" before acting are accumulating exposure while they wait. Regulators do not issue warnings before examinations.

The vendor defense does not work. Regulated entities are responsible for the AI systems they deploy, full stop. OCC Bulletin 2023-17, the EU AI Act deployer obligations, and every federal financial regulator's third-party risk management guidance converge on this point. "Our vendor told us the model was compliant" is not a defense that has ever satisfied a regulatory examiner. Due diligence on vendor AI systems is a legal obligation, not a best practice.

Bias testing is not optional. ECOA, the Fair Housing Act, and Title VII create legal exposure for discriminatory algorithmic systems, and the Fair Credit Reporting Act adds its own notice and accuracy obligations. The CFPB and DOJ are actively investigating and pursuing cases. The Equal Employment Opportunity Commission has issued technical assistance documents on AI in hiring. The legal standard includes disparate impact: a model can be discriminatory without any discriminatory intent. Because liability attaches to outcomes rather than intent, testing for bias is a legal necessity, not a voluntary quality assurance step. Organizations that skip it are not managing risk; they are deferring it.
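A first-pass disparate impact screen can be very small. The sketch below computes an adverse impact ratio: the favorable-outcome rate of one group divided by that of a reference group. The 0.8 threshold is the "four-fifths" rule of thumb from the EEOC's Uniform Guidelines on employee selection; using it here is an assumption for illustration. It is a screening heuristic, not a safe harbor, and fair lending analysis typically goes well beyond it. The group data is hypothetical.

```python
def selection_rate(outcomes: list[int]) -> float:
    """Share of favorable outcomes (1 = approved or selected, 0 = not)."""
    if not outcomes:
        raise ValueError("outcomes must not be empty")
    return sum(outcomes) / len(outcomes)


def adverse_impact_ratio(group: list[int], reference: list[int]) -> float:
    """Favorable-outcome rate of a group relative to the reference group."""
    reference_rate = selection_rate(reference)
    if reference_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return selection_rate(group) / reference_rate


FOUR_FIFTHS = 0.8  # screening threshold, assumed for illustration

if __name__ == "__main__":
    group_a = [1] * 30 + [0] * 70   # hypothetical: 30% approved
    group_b = [1] * 50 + [0] * 50   # hypothetical: 50% approved
    ratio = adverse_impact_ratio(group_a, group_b)
    flag = "review" if ratio < FOUR_FIFTHS else "ok"
    print(f"adverse impact ratio = {ratio:.2f} ({flag})")
```

A ratio below the threshold does not prove discrimination and a ratio above it does not rule it out; the value of the check is that it runs on every model version and leaves a record.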

Model governance is a board-level issue. The OCC, Federal Reserve, and FDIC have all issued guidance making model risk governance a board-level responsibility. Directors who treat AI risk as a CRO or CTO issue — something technical, something that goes in a committee report — are misreading the regulatory signal. The signal is that boards are expected to understand the AI risk profile of their institutions. Directors who cannot demonstrate that understanding in an examination create personal exposure for themselves, not just institutional exposure for the bank.

A Framework for AI Regulatory Risk Assessment

Frameworks are only useful if they drive action. This one is designed to be completed in a working session, not a six-month consulting engagement.

Step 1: Map Your AI Inventory. This sounds obvious. Most large organizations cannot do it. AI systems proliferate across business units, often deployed by teams that do not think of themselves as AI teams. The inventory must include vendor models, internally developed models, and AI features embedded in third-party software. You cannot manage what you cannot see.

Step 2: Apply the Risk Tier. Use the EU AI Act's categories as a working framework regardless of your geographic scope — they are a useful proxy for regulatory intensity globally. Ask: does this system make consequential decisions about individuals' access to credit, employment, healthcare, or safety? If yes, treat it as high-risk. High-risk systems require documentation, bias testing, explainability, and human oversight design. No exceptions.

Step 3: Assess Regulatory Jurisdiction. For each system in your inventory, list the applicable regulations. For a US bank using AI in credit underwriting: SR 11-7, ECOA/Regulation B, FCRA, CFPB guidance. For a healthcare AI company: FDA SaMD framework, HIPAA, state regulations. For any company with EU users: GDPR Article 22, EU AI Act. If you cannot complete this list for a given system, that is the first gap.

Step 4: Gap Analysis. For each applicable regulation, identify what it requires and what you have today. SR 11-7 requires independent model validation — do you have it? ECOA requires adverse action reason codes — can your model produce them? EU AI Act requires technical documentation — does it exist? The gap list is your regulatory risk register.

Step 5: Prioritize by Probability Times Impact. Not all gaps carry equal risk. A CFPB enforcement action for discriminatory credit decisions is high probability and high impact — the CFPB is actively pursuing these cases, and the reputational and financial consequences are severe. A theoretical EU AI Act fine for a product with minimal EU exposure is lower priority. Rank the gaps. Address the highest-priority items first. Don't let the theoretical crowd out the immediate.
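The five steps fit in a spreadsheet, but a short sketch shows how little structure the risk register needs. The one below covers the tiering question from Step 2 and the probability-times-impact ranking from Step 5. All names, domains, and scores are hypothetical, and the probability and impact values are judgment calls that the working session has to supply.

```python
from dataclasses import dataclass

# Domains from the Step 2 question; a hypothetical working list.
HIGH_RISK_DOMAINS = {"credit", "employment", "healthcare", "safety"}


def risk_tier(domain: str) -> str:
    """Step 2: treat systems making consequential decisions about individuals as high-risk."""
    return "high" if domain in HIGH_RISK_DOMAINS else "lower"


@dataclass(frozen=True)
class Gap:
    system: str         # entry from the Step 1 inventory
    regulation: str     # from the Step 3 jurisdiction list
    missing: str        # what the Step 4 gap analysis found absent
    probability: float  # 0.0-1.0, estimated likelihood of regulatory action
    impact: int         # 1-5, estimated severity if it happens

    @property
    def score(self) -> float:
        return self.probability * self.impact


def prioritize(gaps: list[Gap]) -> list[Gap]:
    """Step 5: rank gaps by probability times impact, highest first."""
    return sorted(gaps, key=lambda g: g.score, reverse=True)


REGISTER = [
    Gap("support-chatbot", "EU AI Act", "technical documentation", 0.1, 3),
    Gap("credit-underwriting", "SR 11-7", "independent model validation", 0.5, 4),
    Gap("credit-underwriting", "ECOA/Regulation B", "adverse action reason codes", 0.6, 5),
]

if __name__ == "__main__":
    for gap in prioritize(REGISTER):
        print(f"{gap.score:.1f}  {gap.system}: {gap.regulation} ({gap.missing})")
```

With these illustrative scores the credit underwriting gaps sort ahead of the chatbot documentation gap, which matches the ordering the step describes.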

Key Takeaways

- The EU AI Act has extraterritorial reach. If your AI system is offered in the EU or its output is used there, you are subject to it, regardless of where you are headquartered. Most US companies have not assessed this exposure.

- The "vendor defense" does not exist. OCC Bulletin 2023-17 and EU AI Act deployer obligations make clear: you are responsible for the AI systems you deploy, whether you built them or bought them.

- Compliance costs are non-linear. As a rough planning figure, building compliance into AI systems from the start costs 10-20% more upfront and avoids 3-5x that cost in remediation, fines, and forced product withdrawal.

- Adverse action explainability is a hard legal requirement in US credit markets. CFPB Circular 2022-03 removed any ambiguity. Black-box credit models that cannot explain individual decisions cannot lawfully be used to deny credit.

- Bias testing is a legal obligation, not a quality assurance step. ECOA and Title VII create disparate impact liability regardless of intent. Active CFPB and DOJ enforcement makes this a live risk, not a theoretical one.

- Model governance is a board-level issue. Federal financial regulators have made this explicit. Directors who cannot demonstrate understanding of their institution's AI risk profile are creating personal liability exposure.