What is AI in Fintech? A Plain-English Guide

You probably interacted with AI in financial services before you finished your first cup of coffee today. Your bank flagged a transaction overnight and sent you a text. Your credit card app categorized last night's dinner as "Restaurants" without you touching anything. A savings app nudged you because your balance hit a threshold. Much of that was AI: not a human analyst, and often not a script someone wrote in 2005, but a machine learning model making real-time decisions about your money.

Yet most people, including many who work in finance, have only the vaguest sense of what that actually means. "AI" has become a word that covers everything from a simple rule to a billion-parameter neural network, and the gap between the marketing pitch and the technical reality is enormous. If you're a career switcher trying to break into fintech, a junior analyst wondering why your bank bought some AI platform, or just someone who wants to understand the industry they're working in, this article is for you.

We're going to strip away the hype. No sci-fi. No "robots taking over." Just a clear explanation of what AI does in financial services today, how it got there, and why it matters for your career.

What AI Actually Does (Not What Sci-Fi Says It Does)

The AI in movies is sentient. It has goals, desires, and occasionally wants to destroy humanity. The AI in your bank is doing something far more mundane: it is looking at a large pile of historical data and making probabilistic predictions about new situations.

That's it. That's the core of it.

When your bank's fraud detection system evaluates a transaction, it is not "thinking." It is doing extremely fast pattern matching: given everything we know about how this customer normally behaves, and given everything we've seen about how fraudulent transactions tend to look, what is the probability that this particular transaction is fraud? If that probability exceeds a threshold, the transaction gets flagged or blocked. No intuition, no gut feeling — just math, data, and probability at massive scale.
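The decision step described above can be sketched in a few lines. This is a minimal illustration, not any bank's actual logic: the function name `decide` and both threshold values are hypothetical, and in practice the probability would come from a trained model.

```python
def decide(fraud_probability: float,
           alert_threshold: float = 0.30,
           block_threshold: float = 0.90) -> str:
    """Map a model's fraud probability to an action.

    The thresholds are illustrative assumptions. Real systems tune them
    against the cost of missed fraud versus the cost of annoyed customers.
    """
    if not 0.0 <= fraud_probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if fraud_probability >= block_threshold:
        return "block"
    if fraud_probability >= alert_threshold:
        return "alert"
    return "approve"


for p in (0.02, 0.45, 0.97):
    print(p, decide(p))
```

Moving either threshold changes how many customers get interrupted and how much fraud slips through, which is a business decision rather than a modeling one.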

In financial services, almost every AI application is answering one of three fundamental questions:

- Is this suspicious? (Fraud detection, AML transaction monitoring, identity verification)

- Will this person or business repay / behave as expected? (Credit underwriting, insurance pricing, churn prediction)

- What should we offer or suggest to this person right now? (Product recommendations, personalized offers, financial planning)

The tools used to answer those questions have become dramatically more sophisticated over the past 20 years — but the questions themselves haven't changed. What has changed is the speed, accuracy, and scale at which they can be answered.

One more thing worth saying clearly: AI is not magic. It requires data (lots of it, and good quality). It requires human decisions about what to optimize for. It can be wrong — sometimes systematically, in ways that cause real harm. We'll get to that. But the starting point is understanding that AI is a tool for pattern recognition and prediction, not an oracle.

A Brief History: How We Got Here

Rule-Based Systems (1980s–2000s)

Early fraud detection was logic written by humans. A compliance officer would sit down and write rules: "If a transaction is over $10,000 AND the customer has never transacted in this country, flag it." These systems were transparent and easy to audit — you could always explain exactly why a transaction was flagged — but they were brittle. Fraudsters learned the rules and worked around them. And because the rules were written by people, they couldn't keep up with the constantly changing patterns of financial crime.
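The example rule above translates almost word for word into code. The sketch below is hypothetical (the `Transaction` type and the country codes are made up for illustration), but it shows both why such rules are easy to audit and why they are easy to evade.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    amount: float
    country: str


def rule_flag(txn: Transaction, countries_seen: set) -> bool:
    """Flag when the amount is over 10,000 AND the country is new for this customer."""
    return txn.amount > 10_000 and txn.country not in countries_seen


history = {"GB", "FR"}  # countries this customer has transacted in before
print(rule_flag(Transaction(12_500, "PT"), history))  # large amount, new country
print(rule_flag(Transaction(9_999, "PT"), history))   # just under the limit
```

The second call is the weakness in miniature: a fraudster who knows the limit simply stays under it.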

False positive rates were brutal. Banks were blocking legitimate transactions constantly, which frustrated customers and cost money in manual review.

Machine Learning (2010s)

The shift to machine learning changed the equation. Instead of engineers writing rules, you train a model on millions of labeled examples — past transactions where you already know the outcome (fraud or not fraud) — and let the algorithm find the patterns. The model might discover that a combination of 50 variables, weighted in a specific way, predicts fraud better than any rule a human would write. Many of those variable relationships are not intuitive. That's precisely the point.
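To make "let the algorithm find the patterns" concrete, here is a toy logistic regression trained with plain gradient descent. Everything in it is an assumption for illustration: the eight labeled rows, the two features (a scaled amount and a new-country flag), and the learning rate. Production models use far more data and features, and usually a library rather than hand-written training loops.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x):
    """Estimated probability that transaction x is fraud."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train_logistic(rows, labels, lr=0.5, epochs=2000):
    """Fit weights by batch gradient descent on the logistic loss."""
    weights = [0.0] * len(rows[0])
    bias = 0.0
    n = len(rows)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(rows, labels):
            error = predict(weights, bias, x) - y
            for j, xi in enumerate(x):
                grad_w[j] += error * xi
            grad_b += error
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


# Made-up labeled history: (scaled amount, new-country flag) -> 1 if fraud
rows = [(0.1, 0), (0.2, 0), (0.2, 1), (0.3, 0),
        (0.8, 1), (0.9, 1), (0.7, 1), (0.9, 0)]
labels = [0, 0, 0, 0, 1, 1, 1, 1]

weights, bias = train_logistic(rows, labels)
print(predict(weights, bias, (0.95, 1)))  # large amount, new country
print(predict(weights, bias, (0.05, 0)))  # small amount, familiar country
```

Nobody wrote a threshold here. The weights that separate the two groups were learned from the labeled examples, which is the shift this section describes.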

The tradeoff: these models are harder to explain. "The algorithm flagged it" is not a satisfying answer for a customer whose legitimate transaction was declined, and regulators have real concerns about this, which we'll come back to.

Deep Learning (2015–2020)

Deep learning added more layers to the mathematical models, enabling AI to handle more complex data types. Suddenly computers could recognize handwriting on checks well enough to automate deposit processing. Natural language processing (NLP) improved enough to analyze customer service transcripts, legal documents, and earnings calls. Anomaly detection in transaction networks became more sophisticated.

This era produced the behavioral AI used in fraud detection at major banks today — systems that build a model of each individual customer's spending habits and flag deviations, rather than applying the same rules to everyone.
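A rough way to picture "a model of each individual customer" is to score a new transaction against that customer's own history instead of a global limit. The sketch below uses a simple z-score as a stand-in; real behavioral systems are far richer, and the spending histories are invented for illustration.

```python
from statistics import mean, stdev


def deviation_score(history, amount):
    """How many standard deviations `amount` sits from this customer's average."""
    if len(history) < 2:
        raise ValueError("need at least two past transactions")
    spread = stdev(history)
    if spread == 0:
        return 0.0 if amount == history[0] else float("inf")
    return abs(amount - mean(history)) / spread


frugal = [12.0, 15.0, 9.5, 14.0, 11.0]             # hypothetical customer A
big_spender = [400.0, 950.0, 620.0, 1200.0, 780.0]  # hypothetical customer B

# The same 900 purchase is wildly unusual for A and routine for B.
print(deviation_score(frugal, 900.0))
print(deviation_score(big_spender, 900.0))
```

A single fixed rule would treat both customers identically. Scoring against personal history is what lets the system stay quiet for one and raise a flag for the other.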

Large Language Models and Generative AI (2022–Present)

This is where we are now. The release of ChatGPT in late 2022 accelerated financial services firms' interest in large language models (LLMs), the technology that underlies it. LLMs are particularly good at processing unstructured text: contracts, regulatory filings, customer emails, support tickets, financial news. They're being used to automate document review, power more capable customer chatbots, and assist financial advisors with research and client communication. Morgan Stanley, JPMorgan, and Bloomberg all have active LLM deployments, and this is still very early days.

5 Everyday AI Examples in Financial Services

1. Fraud Alerts — Your Bank's AI Watching Every Transaction

You're in Lisbon on holiday. You buy lunch at a small café near the waterfront. Twenty seconds later, you get a text from your bank: "Did you make a purchase at Tasca do Chico for €18.50?"

No human reviewed that transaction. The bank's fraud detection AI, running on infrastructure like Mastercard's Decision Intelligence, which analyzes 75 billion transactions per year, evaluated that transaction in milliseconds. It considered dozens of signals simultaneously. You last transacted in London three hours ago, which is impossible travel unless you flew. You did fly, but the model only knows that if it saw your flight-related purchases. You've never transacted in Portugal before. The merchant is a restaurant with a pattern of transaction sizes consistent with legitimate dining, which reduces suspicion. Net result: a medium-probability fraud flag, insufficient to block but sufficient to alert.
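The "impossible travel" signal is one of the easier ones to sketch. The code below computes the great-circle distance between two transactions and the speed needed to cover it. The speed cut-offs (150 km/h for ground travel, 900 km/h for a commercial flight) and the function names are assumptions for illustration, not values from any real fraud system.

```python
import math


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def travel_check(distance_km, hours, ground_kmh=150.0, air_kmh=900.0):
    """Classify the implied speed between two consecutive transactions."""
    if hours <= 0:
        raise ValueError("hours must be positive")
    speed = distance_km / hours
    if speed <= ground_kmh:
        return "plausible"
    if speed <= air_kmh:
        return "plausible only by air"
    return "impossible"


london = (51.5074, -0.1278)
lisbon = (38.7223, -9.1393)
distance = haversine_km(*london, *lisbon)

print(round(distance), "km")
print(travel_check(distance, hours=3.0))
print(travel_check(distance, hours=0.5))
```

In the Lisbon scenario the three-hour gap lands in the middle category, which matches the outcome described above: suspicious enough to alert, not enough to block.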

What's remarkable is the scale. Chase processes over 15 billion transactions per year. No human fraud team can review individual transactions at that volume in real time — the bank would need to hire more fraud analysts than there are people in a mid-sized city. AI makes real-time transaction monitoring economically viable in a way that simply wasn't possible before.

The improvement in accuracy has been dramatic. AI-driven fraud detection has cut false positive rates significantly compared to rule-based systems, while catching more actual fraud. Better for customers (fewer legitimate transactions blocked), better for banks (less revenue lost to fraud), better for everyone except fraudsters.

2. Instant Credit Approval — Loan Decisions in Seconds

You apply for a credit card online. You fill out a form, submit it, and 45 seconds later you have an answer — approved, with a $12,000 credit limit. No waiting 7-10 business days for a loan officer to pull your file, assess your application against internal guidelines, and write up a decision.

Behind that 45-second answer is a credit scoring model that pulled your credit bureau data, ran it through a trained model, calculated a probability of default, compared that probability to the bank's risk appetite and pricing model, and generated a credit limit and interest rate that reflect your risk profile.
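The last two steps of that pipeline, comparing a default probability to a risk appetite and turning it into a limit and a rate, might look roughly like this. Every number here (the 8% cut-off, the income shares, the 12% base rate and the risk premium) is a hypothetical placeholder, and the default probability is assumed to come from an upstream model.

```python
def credit_decision(default_probability, annual_income,
                    max_default_probability=0.08):
    """Turn a model's probability of default into approve/limit/APR.

    All constants are illustrative assumptions, not real pricing.
    """
    if default_probability > max_default_probability:
        return {"approved": False, "limit": 0, "apr": None}

    # Lower risk earns a larger share of income as the credit limit.
    headroom = 1.0 - default_probability / max_default_probability
    limit_share = 0.05 + 0.25 * headroom
    limit = int(annual_income * limit_share // 100) * 100  # round down to 100s

    # Base rate plus a premium that grows with risk.
    apr = round(0.12 + 2.0 * default_probability, 4)
    return {"approved": True, "limit": limit, "apr": apr}


print(credit_decision(0.01, 60_000))
print(credit_decision(0.07, 60_000))
print(credit_decision(0.20, 60_000))
```

The point of the sketch is the shape of the logic: one probability drives the approval, the limit, and the price at once, which is why the quality of that probability matters so much.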

The traditional version of this — FICO scores — has been around since the 1980s and uses a relatively small number of variables (payment history, amounts owed, length of credit history, new credit, credit mix). FICO was already an early form of predictive modeling. What's changed is the sophistication of the models and the range of data they can use.

Companies like Upstart have pushed this further. Upstart's model uses over 1,600 variables, including educational background, employment history, and a range of behavioral signals, to score borrowers, particularly "thin-file" borrowers who have limited credit history and would score poorly or not at all on traditional FICO. The claim, which Upstart makes and which draws on comparison results it reported to the CFPB under a No-Action Letter (not an independent CFPB study), is that this approach approves more borrowers at the same or lower default rates compared to traditional scoring. The AI finds patterns in the data that FICO misses.

3. Chatbot Support — Why Erica Isn't Just a Script

You open Bank of America's mobile app and type: "How much did I spend at restaurants last month?" Within two seconds, Erica — BofA's virtual financial assistant — gives you an exact figure, a breakdown by individual restaurant if you want it, and a comparison to last month.

By 2024, Erica had handled over 1.5 billion client interactions since its launch. That volume is only possible because Erica isn't a human and isn't a simple scripted chatbot.

Traditional chatbots — the kind you've probably encountered that are genuinely terrible — work on decision trees. They have a list of expected inputs and pre-written responses. If you ask something slightly outside the script, they fall apart. "What did I spend on restaurants?" might work; "what did I drop on food last month?" might not, because the keyword matching fails.
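The failure described above is easy to reproduce. Here is a deliberately naive scripted bot that looks for exact keywords; the `SCRIPT` table and the canned replies (including the dollar figures) are invented for illustration.

```python
# Hypothetical script: keyword -> pre-written response
SCRIPT = {
    "restaurants": "You spent $212.40 at restaurants last month.",
    "balance": "Your balance is $1,530.18.",
}


def scripted_reply(message: str) -> str:
    """Return the canned reply for the first keyword found, else give up."""
    words = message.lower().replace("?", "").split()
    for keyword, reply in SCRIPT.items():
        if keyword in words:
            return reply
    return "Sorry, I didn't understand that."


print(scripted_reply("What did I spend on restaurants?"))
print(scripted_reply("what did I drop on food last month?"))
```

Both questions mean the same thing to a person, but only the first contains a word the script knows. Adding "food" to the table fixes this one phrasing and leaves every other paraphrase broken.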

Modern financial AI assistants use Natural Language Processing (NLP) to understand intent, not just match keywords. The same underlying technology that powers Siri or Google Assistant, fine-tuned on banking-specific data, can understand that "food last month" and "restaurant spending in January" are asking the same question. They can handle follow-up questions that reference the previous answer, remember context within a conversation, and escalate to human agents when the query is genuinely complex.

The business case is straightforward: Erica handles millions of queries per day. If even a small share of those would otherwise have gone to human agents at $15-25 per interaction, the savings run to hundreds of millions of dollars annually, and the service is available 24/7 in a way human teams can't match.

4. Robo-Advisors — A Portfolio Manager in an App

Before robo-advisors appeared around 2010, getting personalized investment advice meant meeting with a financial advisor who required a minimum account size, typically $250,000 or more, and charged 1% of assets annually. For most people under 40, this wasn't accessible.

Then you open Betterment. You answer a handful of questions: What are you investing for? When will you need the money? How would you feel if your portfolio dropped 30% in a market crash? Based on your answers, the platform builds a diversified portfolio across a set of low-cost ETFs, a mix of stocks and bonds calibrated to your timeline and risk tolerance. It takes about 10 minutes, there's no minimum balance, and the fee is 0.25% annually.

The "AI" in robo-advisors is doing a few specific things:

Portfolio construction applies Modern Portfolio Theory — a mathematical framework for building portfolios that maximize expected return for a given level of risk — at a per-customer level, automatically.

Rebalancing monitors your portfolio allocation and automatically buys and sells to maintain your target allocation as markets move. A human advisor doing this manually for thousands of clients is impractical; software does it continuously.

Tax-loss harvesting — selling investments that are down to realize a tax loss that offsets gains elsewhere — is a strategy previously available only to wealthy clients because it required constant monitoring and execution. Robo-advisors automate this.
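Each of the three jobs above reduces to fairly simple arithmetic, which is why software can do it for every customer. The sketch below is a simplified illustration under stated assumptions: a two-asset portfolio, made-up return and volatility figures, hypothetical fund names, and no handling of taxes rules such as wash sales, trading costs, or more than two assets.

```python
import math
from dataclasses import dataclass


def portfolio_stats(w_stock, mu_stock, mu_bond, sd_stock, sd_bond, corr):
    """Expected return and volatility of a two-asset stock/bond mix."""
    w_bond = 1.0 - w_stock
    expected_return = w_stock * mu_stock + w_bond * mu_bond
    variance = ((w_stock * sd_stock) ** 2 + (w_bond * sd_bond) ** 2
                + 2 * w_stock * w_bond * sd_stock * sd_bond * corr)
    return expected_return, math.sqrt(variance)


def rebalance_trades(holdings, targets):
    """Amount to buy (+) or sell (-) per asset to get back to target weights."""
    total = sum(holdings.values())
    return {asset: round(weight * total - holdings.get(asset, 0.0), 2)
            for asset, weight in targets.items()}


@dataclass
class Lot:
    name: str
    cost_basis: float
    market_value: float


def harvestable_loss(lots, min_loss=100.0):
    """Total unrealized loss across lots whose loss is at least min_loss."""
    return sum(lot.cost_basis - lot.market_value
               for lot in lots
               if lot.cost_basis - lot.market_value >= min_loss)


# Portfolio construction: a 60/40 mix with assumed inputs
print(portfolio_stats(0.6, 0.07, 0.03, 0.16, 0.05, 0.1))

# Rebalancing: stocks have drifted to 70% against a 60% target
print(rebalance_trades({"stocks": 7000.0, "bonds": 3000.0},
                       {"stocks": 0.6, "bonds": 0.4}))

# Tax-loss harvesting: only FUND_A has a loss large enough to harvest
lots = [Lot("FUND_A", 5000.0, 4200.0),
        Lot("FUND_B", 3000.0, 3400.0),
        Lot("FUND_C", 1000.0, 950.0)]
print(harvestable_loss(lots))
```

With these assumed inputs the 60/40 mix has lower volatility than the weighted average of its two parts, which is the diversification benefit Modern Portfolio Theory formalizes.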

The result: by some industry estimates, over $1 trillion is now managed by robo-advisory platforms. This is one of the clearest examples of AI democratizing access to financial services, making capabilities available to ordinary investors that were previously reserved for the wealthy.

5. Personalized Offers — Why Your Banking App Seems to Read Your Mind

You've just booked flights to Tokyo. Three days later, you open your banking app and see a highlighted offer for a travel credit card with no foreign transaction fees and airport lounge access. You were already thinking about applying for one.

This is not coincidence. It is a recommendation system analyzing your transaction history (you booked flights, you bought travel insurance, you spent at a currency exchange) and inferring that a travel card is relevant to you right now. The timing of the offer is deliberate: behavioral models show that people are most likely to respond to financial product offers within a short window after a triggering event.

Companies like Personetics provide this technology to banks serving over 130 million customers globally. The models go beyond simple transaction triggers. They analyze spending trajectory (are you spending more or saving more?), life stage signals (did you recently buy baby products, suggesting a new child?), account behavior (did you just receive a large deposit, suggesting you might be interested in investment products?), and channel engagement (do you respond better to in-app messages or email?).

Done well, this is genuinely useful — getting a relevant offer at the right time is better than generic spam. Done poorly, it can feel intrusive. The line between "helpfully personalized" and "creepily surveillance-like" is one the industry is still navigating.

Why It Matters for Your Career and Business

If You Work in Financial Services

AI is reshaping roles at every level, not by eliminating jobs wholesale but by shifting what skills matter. Fraud analysts spend less time reviewing individual flagged transactions (the AI handles the high-volume, low-complexity cases) and more time investigating complex fraud rings that require judgment. Compliance officers use AI tools to do preliminary suspicious activity report (SAR) reviews, then apply their judgment to edge cases. Financial advisors using AI assistance can serve more clients and focus on planning conversations rather than administrative work.

The practical implication: data literacy is becoming a baseline skill. You don't need to train machine learning models. But you should be able to look at an AI system's outputs critically, understand what the model was optimized for, recognize when it might be wrong, and communicate about AI capabilities with technical teams. The people who get promoted in financial services over the next decade will be those who can bridge the gap between business problems and technical solutions.

If You're Building a Fintech Product

AI is no longer a differentiator in most fintech categories. It's table stakes. Credit underwriting without machine learning is slower and less accurate than ML-based underwriting such as Upstart's. Fraud detection without behavioral AI means higher loss rates than your competitors. The question is no longer "should we use AI?" but "which AI capabilities should we build in-house versus buy from vendors, and how do we build the data infrastructure to support them?"

The deeper strategic question is around data. AI is only as good as the data it trains on. Companies that have been systematically collecting and labeling high-quality data for years have a durable advantage. The time to build your data foundation is before you need it.

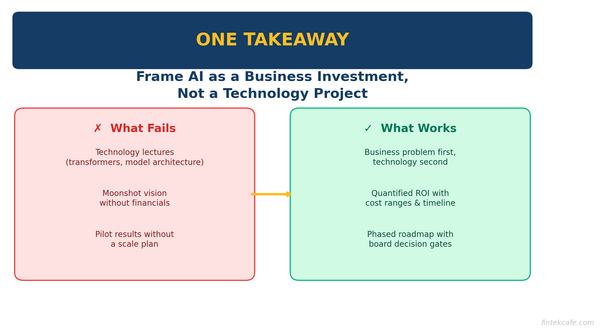

If You're a Manager or Executive

You don't need to understand how gradient boosting works. You do need to understand: what questions AI is well-suited to answer in your business, how to evaluate vendor claims (ask about model performance on your specific data, not benchmark datasets), what the regulatory environment looks like (the EU AI Act, CFPB guidance on algorithmic credit decisions, OCC model risk management guidelines), and how to build teams that deploy AI responsibly. The most expensive AI mistakes in financial services have come from executives who outsourced all the judgment to vendors and didn't ask hard questions.

The Limitations: What AI in Finance Gets Wrong

Skipping this section would be doing you a disservice. AI in financial services has real, documented failure modes.

Bias and historical discrimination. Credit scoring models trained on decades of historical lending data learn from a history that includes explicit and systemic discrimination. If certain ZIP codes, demographics, or types of employment were historically underserved, the model encodes that underservice as a risk signal. Studies have shown that AI-based credit systems can perpetuate or even amplify historical disparate impact, even when protected characteristics are not explicitly included as variables — because proxies for those characteristics (ZIP code, name, school attended) are often correlated. This is an active area of regulatory scrutiny.

Explainability requirements. Under the Equal Credit Opportunity Act and Fair Credit Reporting Act, lenders must provide specific, written reasons when they deny credit. "The model gave you a low score" is not compliant. This creates a genuine tension in deploying complex models: the more sophisticated the model, often the harder it is to explain any individual decision. Interpretable machine learning — building models that are accurate and explainable — is an active research and commercial challenge.

Tail risk and novel events. Models are trained on historical data. By definition, they've never seen a scenario that hasn't happened before. The 2008 financial crisis exposed the brittleness of models calibrated on a period of rising home prices. COVID-19 in 2020 broke consumer credit models trained before the pandemic — default rates didn't behave as predicted because of government stimulus and forbearance programs. When the world changes in novel ways, historical pattern matching fails.

Data quality problems. A common refrain among data scientists is that roughly 80% of their time goes to cleaning data, not building models. Financial data is notoriously messy: inconsistent formats across systems, errors in credit bureau data (studies suggest errors affect roughly a quarter of credit files), missing data for newer customers, behavioral shifts that make old data unrepresentative of current reality. The most sophisticated model on bad data produces bad outputs.
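Two of the failure modes above, bias and explainability, lend themselves to a quick numeric check. The sketch below is illustrative only. The first function compares approval rates between two groups; a ratio below 0.8 is a common rule of thumb for closer review (the "four-fifths rule", borrowed from US employment guidelines), not a legal test for lending. The second derives reason codes from a simple linear risk score, where higher means riskier. The weights, baseline values, and feature names are all hypothetical, and complex models need dedicated attribution techniques instead.

```python
def adverse_impact_ratio(group_a, group_b):
    """Lower approval rate divided by higher approval rate (1.0 = parity)."""
    rate_a = sum(group_a) / len(group_a)
    rate_b = sum(group_b) / len(group_b)
    if max(rate_a, rate_b) == 0:
        raise ValueError("no approvals in either group")
    return min(rate_a, rate_b) / max(rate_a, rate_b)


def reason_codes(weights, applicant, baseline, top_n=2):
    """Features that push a linear risk score up the most versus a baseline."""
    contributions = {name: weights[name] * (applicant[name] - baseline[name])
                     for name in weights}
    adverse = [(value, name) for name, value in contributions.items() if value > 0]
    return [name for value, name in sorted(adverse, reverse=True)[:top_n]]


# Made-up decisions: True = approved
group_a = [True] * 8 + [False] * 2   # 80% approved
group_b = [True] * 5 + [False] * 5   # 50% approved
print(adverse_impact_ratio(group_a, group_b))

# Made-up linear risk model: positive weight means the feature raises risk
weights = {"utilization": 2.0, "late_payments": 0.8, "years_of_history": -0.1}
baseline = {"utilization": 0.3, "late_payments": 0, "years_of_history": 10}
applicant = {"utilization": 0.9, "late_payments": 3, "years_of_history": 2}
print(reason_codes(weights, applicant, baseline))
```

With a linear score the reasons fall straight out of the arithmetic. The tension described above arises because the most accurate models are rarely this simple.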

What's Next

The near-term frontier is generative AI entering higher-stakes financial applications. Morgan Stanley has deployed an OpenAI-powered assistant that gives its 16,000 financial advisors instant access to the firm's research library and internal documents: summarizing, comparing, and answering questions on demand. JPMorgan's COiN (Contract Intelligence) platform reviews commercial loan agreements in seconds, work that previously consumed an estimated 360,000 hours of lawyers' and loan officers' time annually.

The next wave goes further: autonomous AI agents that can execute multi-step financial planning workflows, hyper-personalization that adapts products in real time to individual customer circumstances, and AI-native challenger banks built around model-first architecture rather than legacy systems with AI bolted on. The financial services industry in 2030 will look substantially different from today, and the professionals who understand the underlying technology will be the ones building it.

Key Takeaways

- AI in financial services is pattern recognition and probability estimation at scale — not sentience, not magic, just sophisticated statistics on large datasets.

- The core questions AI answers in finance are: Is this fraudulent? Will this person repay? What should we recommend to this customer right now?

- AI evolved from hand-written rules (1980s) to machine learning (2010s) to deep learning to today's large language models, each step improving accuracy and capability.

- Fraud detection, instant credit decisions, banking chatbots, robo-advisors, and personalized offers are five AI applications you likely interact with already.

- AI is a competitive baseline in fintech — not using it means competing at a structural disadvantage in underwriting, fraud, and personalization.

- The limitations are real and matter: bias in training data, explainability requirements from regulators, brittleness during novel events, and data quality problems.

- Understanding how AI tools work — even at a conceptual level — makes you more effective in any finance role and positions you for where the industry is heading.

Continue Learning

Ready to go deeper? These articles in the FinTekCafe AI Track build on what you've learned here:

- Machine Learning Explained for Finance Professionals (Level 1) — The math behind credit scoring and fraud detection models, explained for non-data scientists.

- How AI is Changing Banking: 5 Things You'll Notice (Level 1) — A look at how the branch, the app, and the back office are all being reshaped.

- AI in Fintech: 10 Real Use Cases Making Money Today (Pro) — A deep dive into specific deployments at Stripe, JPMorgan, Klarna, and others, with revenue and cost metrics.