How to Run an Enterprise Software Evaluation in 30 Days

Most enterprise software evaluations take three to six months. Some take longer. By the time a decision is made, the original requirements have shifted, the evaluation team is exhausted, and the organization settles for whichever vendor survived the process rather than the one that best fits the need.

It does not have to be this way.

This guide gives you a structured 30-day framework for evaluating enterprise software — from requirements gathering to signed contract. It is written for the executive who approves the purchase, not the engineer who tests the features. You will learn how to compress the timeline without cutting corners, what questions to ask every vendor, how to spot red flags in demos, and how to negotiate a deal that protects your organization.

Why Most Evaluations Take Too Long

Enterprise software evaluations drag on for three predictable reasons.

No Clear Decision Criteria Upfront

Without agreed-upon criteria before the first vendor call, every stakeholder brings their own priorities. Sales wants CRM integration. Finance wants reporting flexibility. IT wants security certifications. Without a framework to weigh these against each other, every conversation reopens the same debates.

Too Many Vendors in the Mix

Some organizations invite eight or ten vendors to present. Each vendor gets a demo slot, a follow-up call, a reference check. The evaluation team spends weeks watching demonstrations of products that were never realistic contenders. Three finalists are enough. Five is too many.

No Decision Deadline

Without a firm deadline, evaluations expand to fill available time. New questions arise. Someone suggests looking at one more vendor. A stakeholder misses a demo and needs it rescheduled. A 30-day timeline with a firm decision date forces focus and prevents scope creep.

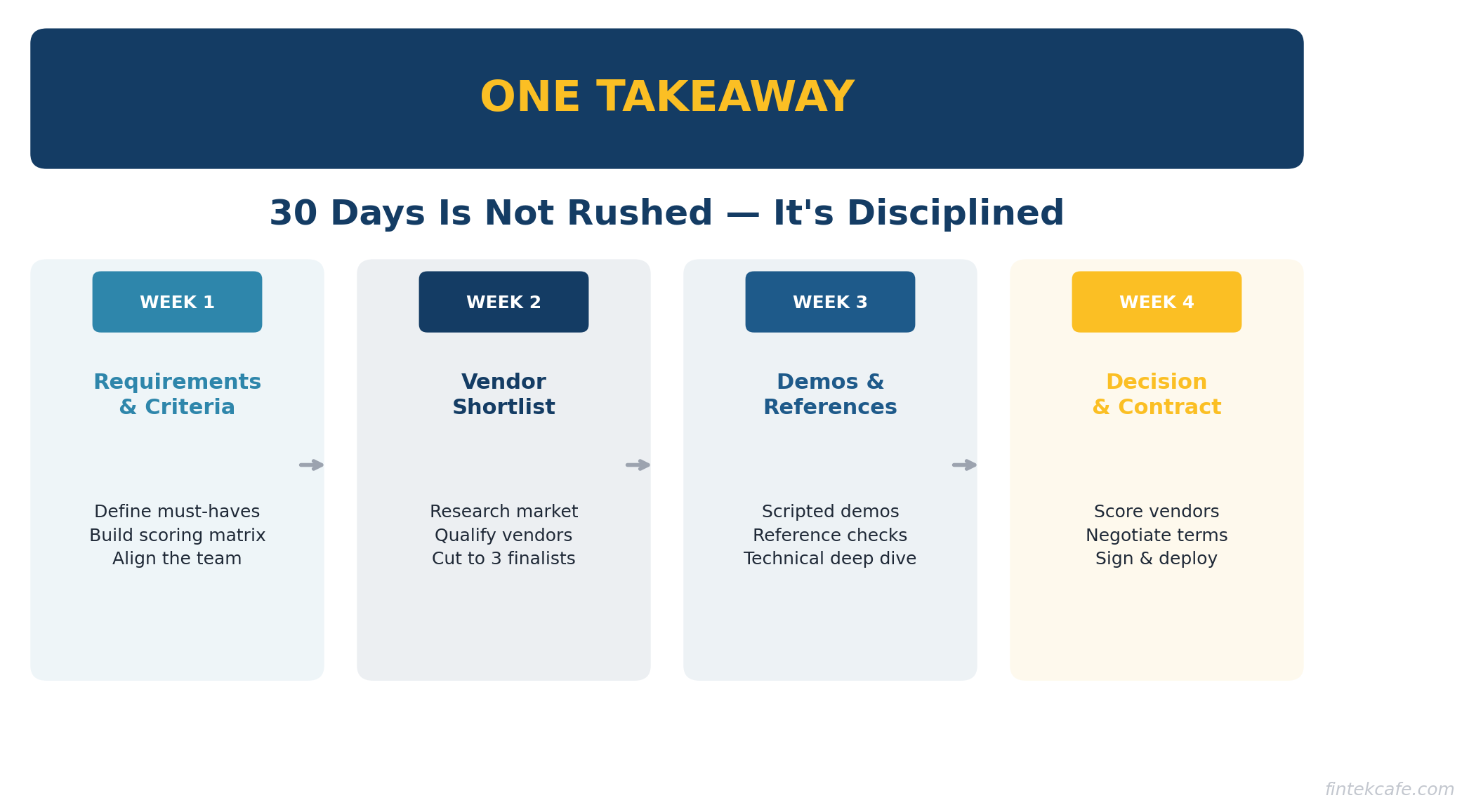

The 30-Day Framework

Week 1: Requirements and Criteria (Days 1-7)

This is the most important week. Get this right and the remaining three weeks run smoothly. Get it wrong and you will repeat this week at the end of the process when you realize the team never agreed on what matters.

Day 1-2: Assemble the Decision Team

Keep it small. You need five people maximum:

- Executive sponsor. The person who owns the budget and makes the final call. This is probably you.

- Business lead. The person who will use the software most or whose team will be most affected.

- Technical lead. Someone from IT or engineering who can evaluate security, integration, and infrastructure requirements.

- Finance representative. Someone who can evaluate total cost of ownership and contract terms.

- Project manager. Someone to keep the process on track, schedule meetings, and document decisions.

Do not add more people to be inclusive. Every additional person adds coordination overhead and slows decisions. Others can provide input through the business lead or technical lead.

Day 3-4: Define Requirements

Create two lists:

Must-haves: Requirements that are non-negotiable. If a vendor cannot meet these, they are eliminated regardless of everything else. Keep this list short — five to eight items. Examples: SOC 2 Type II certification, SSO support, API access, specific regulatory compliance.

Should-haves: Requirements that matter but are not dealbreakers. Rank these in priority order. Examples: mobile app, custom reporting, specific integrations, multilingual support.

The discipline is in the must-have list. Every requirement you add as a must-have narrows the field. Be honest about what is truly non-negotiable versus what is strongly preferred.
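The must-have screen is a pass/fail filter, and a minimal Python sketch can show how it works. The vendor names and capability sets below are hypothetical placeholders, not data about any real product; the must-have entries are taken from the examples above.

```python
# Hypothetical must-have list and vendor capabilities, for illustration only.
MUST_HAVES = {"SOC 2 Type II", "SSO", "API access"}

vendors = {
    "Vendor A": {"SOC 2 Type II", "SSO", "API access", "mobile app"},
    "Vendor B": {"SSO", "API access", "custom reporting"},
    "Vendor C": {"SOC 2 Type II", "SSO", "API access"},
}


def screen(vendors, must_haves):
    """Split vendors into those meeting every must-have and those missing any."""
    qualified, eliminated = [], {}
    for name, capabilities in vendors.items():
        missing = must_haves - capabilities
        if missing:
            # One missing must-have is enough to eliminate the vendor.
            eliminated[name] = sorted(missing)
        else:
            qualified.append(name)
    return qualified, eliminated


qualified, eliminated = screen(vendors, MUST_HAVES)
print(qualified)   # ['Vendor A', 'Vendor C']
print(eliminated)  # {'Vendor B': ['SOC 2 Type II']}
```

Should-haves are deliberately left out of this filter. They only affect ranking among the vendors that pass.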

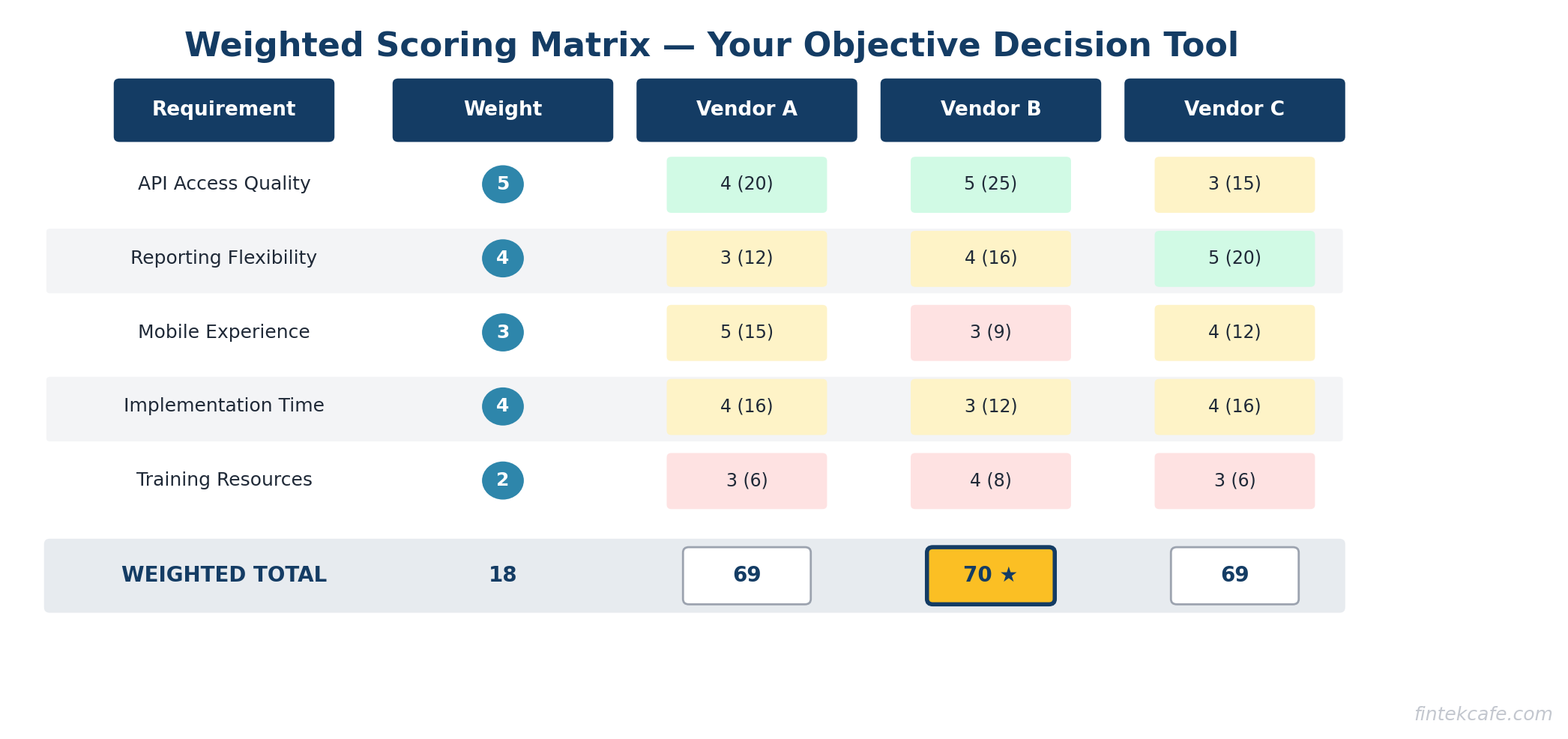

Day 5-7: Build the Scoring Matrix

Create a simple scoring matrix with your should-have requirements weighted by importance. Weight each requirement from 1 to 5, and later score each vendor against it on the same 1-5 scale. This becomes your objective comparison tool. Without it, the final decision devolves into subjective arguments.

| Requirement | Weight | Vendor A | Vendor B | Vendor C |
|---|---|---|---|---|
| API access quality | 5 | - | - | - |
| Reporting flexibility | 4 | - | - | - |
| Mobile experience | 3 | - | - | - |
| Implementation timeline | 4 | - | - | - |
| Training resources | 2 | - | - | - |

Share this matrix with the team. Get agreement before moving forward. This is your decision framework. If the team cannot agree on the matrix, you are not ready to evaluate vendors.

Week 2: Vendor Shortlist (Days 8-14)

Day 8-9: Research the Market

Start with analyst reports (Gartner Magic Quadrant, Forrester Wave, G2 Grid) to understand the landscape. These reports are imperfect but useful for identifying the serious contenders. Supplement with industry peer recommendations and online reviews from verified users.

Create a long list of six to eight potential vendors, then immediately cut it to three or four based on your must-have requirements. Check their websites, documentation, and pricing pages. Any vendor that clearly cannot meet your must-haves gets cut before you waste time on a call.

Day 10-11: Initial Vendor Conversations

Send each shortlisted vendor a brief document: your company size, industry, primary use case, must-have requirements, and evaluation timeline. Tell them you are making a decision by day 30. This does two things: it lets them self-select out if they are not a fit, and it signals that you are running a structured process.

Schedule a 30-minute introductory call with each vendor. The purpose is not a demo. It is a qualification conversation. Can they meet your must-haves? What is their pricing model? What does implementation look like? How long until you are live?

Day 12-14: Cut to Three Finalists

Based on the introductory calls, cut to three finalists. Inform the eliminated vendors promptly. Schedule demos with the three finalists for week three.

Three finalists is the right number. Two gives you insufficient comparison. Four or more creates evaluation fatigue and makes the decision harder without making it better.

Week 3: Demos and References (Days 15-21)

Day 15-17: Structured Demos

This is where evaluations go wrong. Most demos are vendor-controlled presentations that show the product at its best. You need to take control.

Send each vendor a demo script in advance. Tell them exactly what you want to see:

- Walk through your three most common workflows using the product.

- Show reporting and analytics capabilities using sample data similar to yours.

- Demonstrate the admin experience: user management, permissions, configuration.

- Show the integration points you care about most.

- Break something. Show what error handling and support escalation look like.

Give each vendor the same script. Same workflows, same scenarios. This makes comparison possible.

Schedule 90-minute demo slots and reserve 30 minutes after each one for the team to debrief and score while impressions are fresh. Never schedule all three demos on the same day, because attention degrades after the second one.

Day 18-19: Reference Checks

Ask each vendor for three customer references. Then find two more on your own. Vendor-provided references are curated. Self-sourced references give you the real picture.

When talking to references, ask these questions:

- What was the implementation timeline, and did it match what the vendor promised?

- What has been the biggest surprise — positive or negative — since going live?

- How responsive is support when something breaks?

- If you were making this decision again, would you choose the same vendor?

- What would you negotiate differently in the contract?

The last two questions are the most revealing. Pay close attention to hesitation or qualified answers.

Day 20-21: Technical Deep Dive

Your technical lead needs dedicated time with each vendor's engineering or solutions team. This is not another demo. This is a working session covering:

- Security architecture and certifications

- Data handling, storage, and privacy practices

- API documentation and rate limits

- Integration architecture for your specific stack

- Disaster recovery and uptime guarantees

- Data export and portability

If a vendor resists a technical deep dive, that is a red flag. Serious vendors welcome technical scrutiny.

Week 4: Decision (Days 22-30)

Day 22-23: Complete the Scoring Matrix

Each decision team member fills in their scores independently. Then come together to review. Where scores diverge significantly, discuss why. The goal is not consensus on every line item — it is understanding why team members see things differently.

Calculate weighted totals. The vendor with the highest score is your frontrunner, but the numbers are a guide, not a verdict. If the scoring is close and the team has a strong instinct about one vendor, discuss that openly.
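As a rough sketch of the arithmetic, the weighted total for a vendor can be computed by averaging the team's independent scores per requirement, multiplying by the weight, and summing. The weights below come from the sample matrix in Week 1; the individual scores, the three-person team, and the divergence threshold of 2 points are made-up assumptions for illustration.

```python
from statistics import mean

# Weights from the sample scoring matrix; all scores below are hypothetical.
WEIGHTS = {
    "API access quality": 5,
    "Reporting flexibility": 4,
    "Mobile experience": 3,
    "Implementation timeline": 4,
    "Training resources": 2,
}

# scores[vendor][requirement] holds one 1-5 score per team member.
scores = {
    "Vendor A": {
        "API access quality": [4, 4, 4],
        "Reporting flexibility": [3, 3, 3],
        "Mobile experience": [2, 5, 2],
        "Implementation timeline": [4, 4, 4],
        "Training resources": [3, 3, 3],
    },
    "Vendor B": {
        "API access quality": [4, 4, 4],
        "Reporting flexibility": [5, 4, 3],
        "Mobile experience": [4, 4, 4],
        "Implementation timeline": [3, 3, 3],
        "Training resources": [2, 2, 2],
    },
}


def weighted_total(vendor_scores, weights):
    """Sum of weight x average team score across all requirements."""
    return sum(weights[req] * mean(vals) for req, vals in vendor_scores.items())


def divergent_items(vendor_scores, spread=2):
    """Requirements where team scores differ by at least `spread` points."""
    return [
        req for req, vals in vendor_scores.items()
        if max(vals) - min(vals) >= spread
    ]


for vendor, vendor_scores in scores.items():
    total = weighted_total(vendor_scores, WEIGHTS)
    print(f"{vendor}: {total:.1f} / 90, discuss: {divergent_items(vendor_scores)}")
```

With these invented numbers the totals come out at 63 and 64 out of a possible 90, which is exactly the kind of close result where the flagged line items and the team's judgment should drive the discussion rather than the one-point gap.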

Day 24-25: Negotiate the Contract

Never accept the first price. Enterprise software pricing is almost always negotiable. Here are your leverage points:

- Multi-year commitment. Vendors will discount significantly for a two- or three-year deal. Make sure the discount justifies the lock-in.

- Annual vs. monthly billing. Annual prepayment usually earns a 10-20% discount.

- End-of-quarter timing. If the vendor's quarter ends within your 30-day window, they have extra motivation to close.

- Competitive pressure. You have two other finalists. The vendor knows this. You do not need to be aggressive — just transparent that you are evaluating alternatives.

Key contract terms to negotiate beyond price:

- Implementation support included. Do not pay separately for basic setup.

- Price lock for renewal. Cap annual increases at a fixed percentage.

- Data portability clause. If you leave, you get your data in a usable format within 30 days.

- SLA with financial penalties. Uptime guarantees mean nothing without consequences for missing them.

- Exit clause. A reasonable termination provision if the vendor fails to meet agreed service levels.

Day 26-27: Final Due Diligence

Before signing, check three things:

- Vendor financial health. Are they profitable or well-funded? A startup with six months of runway is a risk for a three-year contract.

- Product roadmap alignment. Does their development direction match your future needs? Ask to see the roadmap and assess whether key features you will need are planned.

- Customer retention. What is their churn rate? High churn signals problems that references may not reveal.

Day 28-30: Make the Decision and Sign

Bring the scoring matrix, reference feedback, negotiated terms, and your recommendation to the executive sponsor (or the board, if the purchase requires board approval). Present it as a recommendation with supporting evidence, not an open-ended question.

Sign the contract. Inform the runners-up. Begin implementation planning.

The 10 Questions to Ask Every Vendor

Ask these in the introductory call or demo. The answers tell you more than any marketing material.

- What does your typical customer look like? If their typical customer is nothing like you, their product is optimized for someone else.

- What is your actual average implementation timeline? Not the best case. The average. For companies your size.

- What is included in the base price and what costs extra? Many vendors advertise a low base price and charge for essential features as add-ons.

- How many customers have left in the past 12 months, and why? If they refuse to answer, that is an answer.

- What does your support model look like? Response times, escalation paths, dedicated vs. shared support.

- What happens to my data if I cancel? Export format, timeline, and whether they delete your data after export.

- What is your uptime over the past 12 months? Ask for the actual number, not the SLA target.

- How do you handle breaking changes or major version upgrades? Will you be forced to migrate on their timeline?

- Who are your three largest competitors, and why should I choose you over them? This reveals how well they know the market and where they believe their genuine strengths lie.

- Can I speak to a customer who almost left but stayed? This reference will give you the most honest assessment of the vendor's willingness to resolve problems.

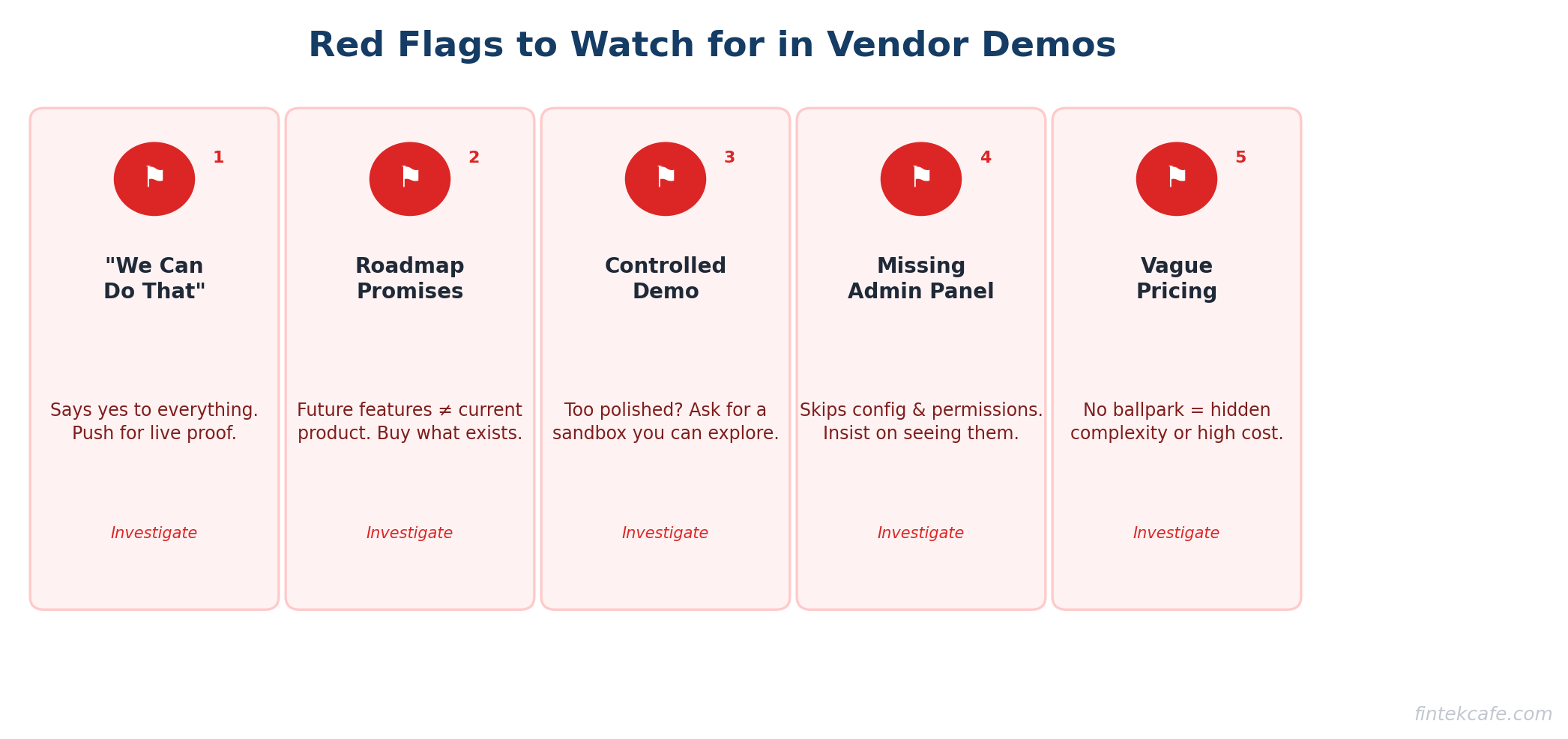

Red Flags in Demos

Watch for these during vendor presentations. Any one of them warrants deeper investigation.

The "We Can Do That" Vendor

Every question you ask gets a yes. Custom workflows? Yes. Complex integrations? Absolutely. Your obscure compliance requirement? No problem. Vendors who say yes to everything often deliver on very little. Push for specifics: "Show me how that works right now."

The Feature Roadmap Promise

"That is on our roadmap for Q3." Never buy software based on future features. Roadmaps change. Buy what exists today and treat future features as a bonus if they arrive.

The Controlled Demo Environment

The demo looks nothing like the actual product. Perfectly formatted data, no loading times, features that seem too polished. Ask to see a production instance or a sandbox you can explore yourself. If they refuse, ask why.

The Missing Admin Experience

The vendor shows the end-user experience beautifully but skips the admin panel. Configuration, user management, permissions, and reporting are where many products fall apart. Insist on seeing them.

The Vague Pricing

"It depends on your specific needs. Let us put together a custom quote." While some customization is normal, a vendor who cannot give you a ballpark range in the first conversation is hiding something — usually a high price or a complex pricing model designed to increase costs over time.

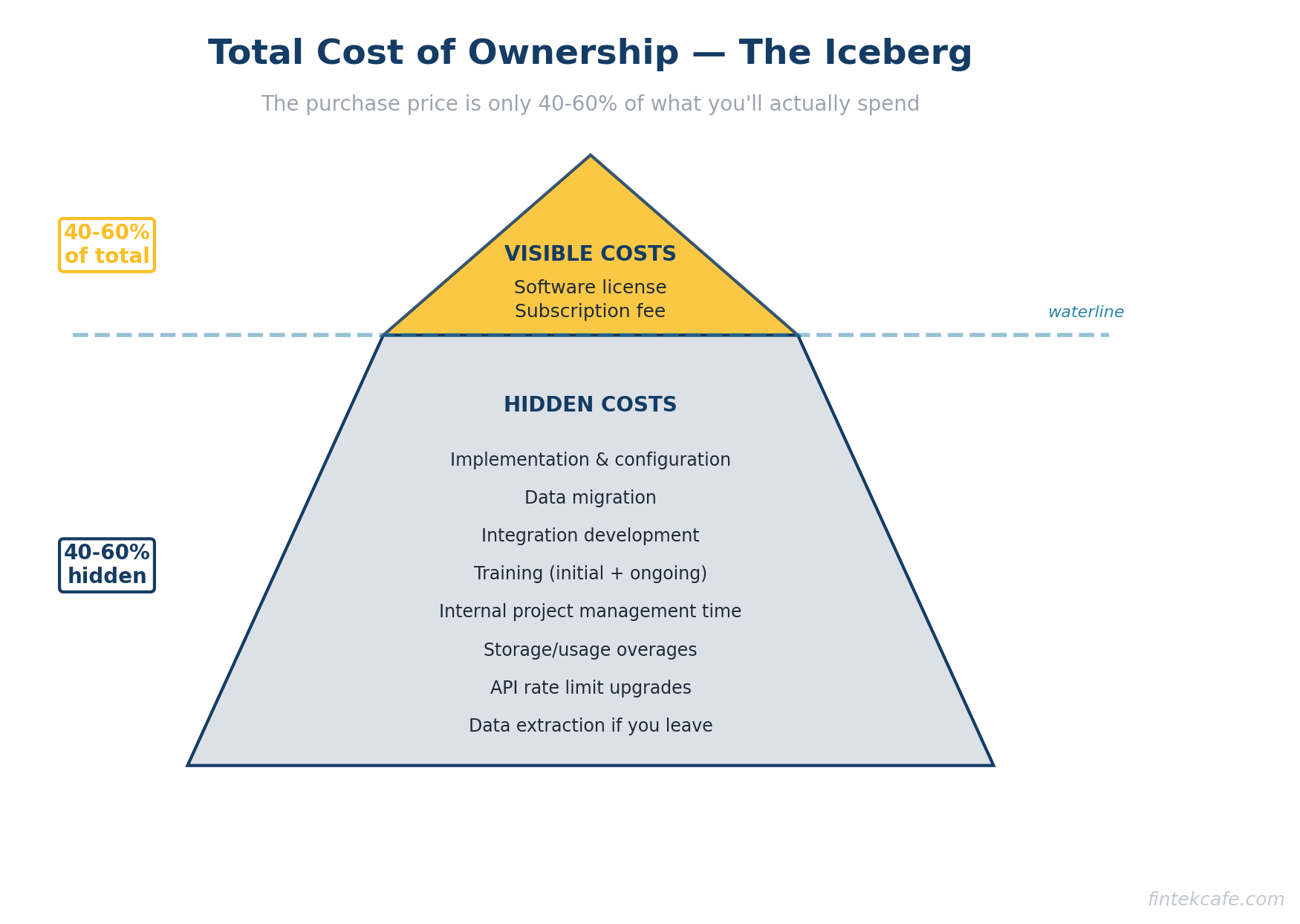

Total Cost of Ownership Checklist

The purchase price is often only 40-60% of what you will actually spend. Use this checklist to calculate true cost.

Year One Costs:

- Software licensing or subscription fees

- Implementation and configuration services

- Data migration from your current system

- Integration development (connecting to your existing tools)

- Training for administrators and end users

- Additional hardware or infrastructure (if applicable)

- Internal project management time (your team's hours)

- Consultant or contractor fees

Ongoing Annual Costs:

- Subscription renewal (check for annual increases)

- Premium support tier (if needed)

- Additional user licenses as your team grows

- Storage or usage overage charges

- Ongoing training for new employees

- Internal administration time

- Integration maintenance and updates

- Customization and configuration changes

Hidden Costs to Investigate:

- Cost to increase API rate limits

- Charges for additional environments (staging, testing)

- Premium features that should be standard

- Compliance or audit-related add-ons

- Cost of extracting your data if you leave

Add it all up for three years. Compare vendors on total three-year cost, not annual subscription price. The cheapest subscription often has the highest total cost.
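A simple way to run the three-year comparison is sketched below. Every figure is an invented placeholder, and the model makes a simplifying assumption: year one uses the year-one checklist, and years two and three each repeat the ongoing annual costs with no price increase.

```python
def three_year_tco(year_one_costs, ongoing_annual_costs):
    """Year-one costs plus two further years of ongoing costs (no increases assumed)."""
    year_one = sum(year_one_costs.values())
    ongoing = sum(ongoing_annual_costs.values())
    return year_one + 2 * ongoing


# Hypothetical vendor with the lower subscription price.
vendor_x = three_year_tco(
    year_one_costs={
        "subscription": 50_000,
        "implementation": 60_000,
        "data migration": 30_000,
        "integration development": 40_000,
        "training": 10_000,
    },
    ongoing_annual_costs={
        "subscription renewal": 50_000,
        "premium support": 15_000,
        "usage overages": 10_000,
        "integration maintenance": 20_000,
    },
)

# Hypothetical vendor with the higher subscription price.
vendor_y = three_year_tco(
    year_one_costs={
        "subscription": 80_000,
        "implementation": 20_000,
        "data migration": 10_000,
        "integration development": 10_000,
        "training": 5_000,
    },
    ongoing_annual_costs={
        "subscription renewal": 80_000,
        "integration maintenance": 5_000,
    },
)

print(vendor_x)  # 380000
print(vendor_y)  # 295000
```

In this made-up case the vendor with the 50,000 subscription costs 85,000 more over three years than the vendor with the 80,000 subscription. If a contract allows annual increases, add them to the renewal line for years two and three before comparing.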

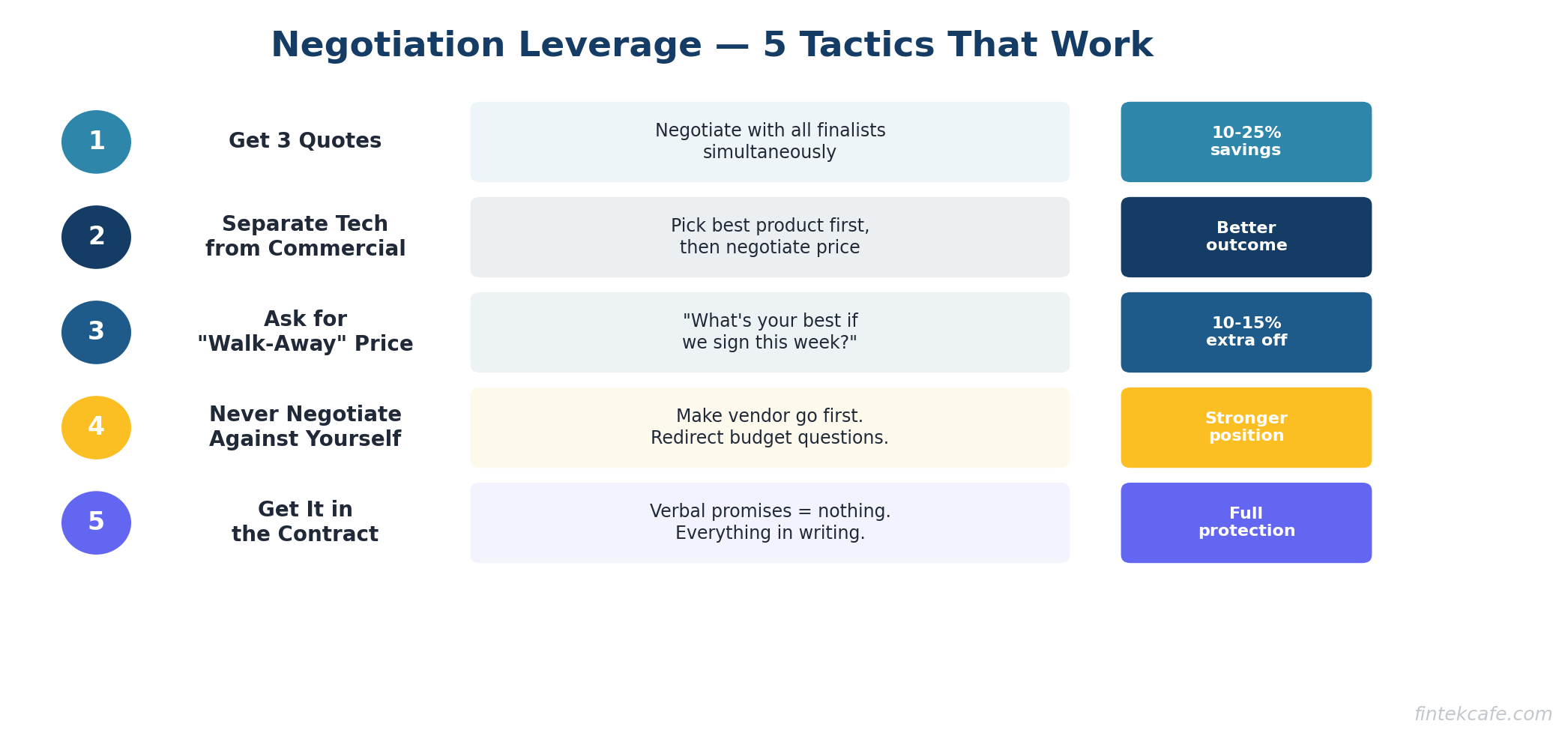

Negotiation Tactics That Work

Get Three Quotes

Even if you have a strong preference, negotiate with all three finalists simultaneously. This is not about playing vendors against each other. It is about understanding market pricing and having alternatives if negotiations stall.

Separate the Technical Decision from the Commercial Decision

Decide which vendor wins on merit first. Then negotiate price. If you negotiate while still deciding, vendors will use discounts to compensate for product weaknesses. Choose the best product, then get the best price on that product.

Ask for the "Walk-Away" Price

Tell your preferred vendor: "We want to move forward with you. What is the best price you can offer if we sign this week?" This signals commitment while creating urgency. Many vendors have authority to offer an additional 10-15% discount to close quickly.

Never Negotiate Against Yourself

Make the vendor go first on price. Then counter. If they ask "What is your budget?" redirect: "We want to understand your standard pricing first so we can evaluate the value." Your budget is your concern, not theirs.

Get Everything in the Contract

Verbal promises mean nothing once the contract is signed. Every commitment — implementation timeline, included support hours, feature delivery dates, performance guarantees — must be in the contract. If a vendor is reluctant to put a promise in writing, they are not confident they can deliver it.

Key Takeaways

- A 30-day evaluation is not rushed — it is disciplined. Most of the time in longer evaluations is wasted on unnecessary vendors, repeated meetings, and scope creep.

- Week 1 (requirements and scoring matrix) is the foundation. Skip it or rush it and the remaining three weeks fall apart.

- Limit your evaluation to three finalists. More than three creates diminishing returns and evaluation fatigue.

- Control the demo. Give vendors your script, not the other way around. Same scenarios for every vendor.

- Self-source references in addition to vendor-provided ones. Ask what they would negotiate differently.

- Calculate total cost of ownership over three years, not just annual subscription price. The cheapest vendor on paper often is not the cheapest in practice.

- Never buy based on roadmap features. If the product does not meet your needs today, move on.

Frequently Asked Questions

Can you really evaluate enterprise software in 30 days?

Yes, if you are disciplined about scope. The framework works for mid-market and enterprise purchases up to seven figures. For very large, highly customized platforms (ERP implementations, core banking systems), you may need 60-90 days, but the same structure applies — just stretch each phase.

What if stakeholders cannot align on requirements in week one?

This is a project management problem, not an evaluation problem. If your stakeholders cannot agree on what they need, adding more time will not help. Escalate to the executive sponsor to make priority calls. Requirements disagreements that persist past day seven signal organizational issues that need to be resolved before spending money on software.

Should I hire a consultant to run the evaluation?

For purchases over $500K or in domains where you lack expertise, a consultant can add value — particularly in contract negotiation and technical assessment. For most mid-market purchases, your internal team can run this framework effectively without outside help.

How do I handle internal politics when stakeholders prefer different vendors?

This is why the scoring matrix exists. It depersonalizes the decision. When someone advocates for a vendor, ask them to point to the scoring criteria that support their position. If their reasoning is "I have used this product before and I like it," that is valuable input but not a decision criterion.

What if the best product is also the most expensive?

Calculate the cost difference over three years and compare it to the value difference. If Vendor A is 20% more expensive but scores 40% higher on your weighted matrix, the premium is likely worth it. Present this analysis to the decision-maker with a clear recommendation.
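The comparison in this answer is two relative differences placed side by side. The sketch below uses invented three-year costs and weighted scores chosen to reproduce the 20% and 40% figures; the function name is illustrative only.

```python
def relative_difference(a, b):
    """How much larger a is than b, expressed as a fraction of b."""
    return (a - b) / b


# Hypothetical inputs: three-year total cost and weighted matrix score.
cost_premium = relative_difference(360_000, 300_000)
score_gain = relative_difference(70, 50)

print(f"Cost premium: {cost_premium:.0%}")  # Cost premium: 20%
print(f"Score gain: {score_gain:.0%}")      # Score gain: 40%
```

Treat the two percentages as a starting point for the recommendation, not a formula. A weighted score is not a direct measure of business value, so the decision-maker still needs the reasoning behind the gap.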

Should I run a proof of concept before deciding?

For complex implementations, yes — but scope it tightly. A POC should take five to seven business days and test your two or three riskiest assumptions. Do not let a POC become an open-ended evaluation that adds weeks to the timeline.